- STMicroelectronics Community

- STM32 MCUs Software development tools

- STM32CubeIDE (MCUs)


Hard Fault on function start - but cannot debug


2023-12-07 01:36 PM - edited 2023-12-07 01:40 PM

I have a problem, but I don't know how to solve it...

When this function (mp3dec_decode_frame(..)) is called, I get a hard fault. OK, but I cannot debug it, because stepping into the function is not possible: the hard fault is always the next step.

Now the background: I used this function to decode MP3 in my H743 project, with no problems.

Then I copied it to this H563 project, but now using Azure RTOS (because STM forces me to use it)...

And now it no longer works, and I cannot even debug what happens there, because the first thing that happens is a jump to the hard fault handler.

So maybe the stack is off in nirvana, but I cannot see anything (I don't understand ARM assembler) that would lead me to a solution.

See, here is the debugger view at the start of the function:

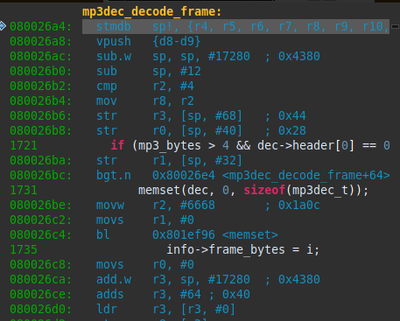

The disassembly shows:

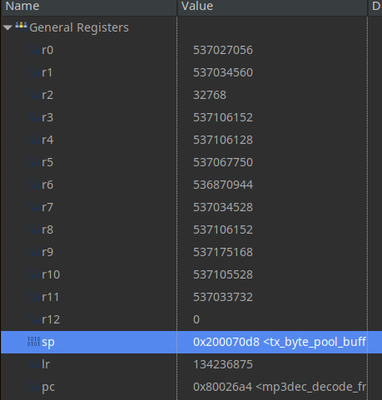

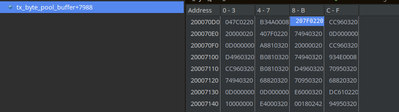

(I see no problem...) The stack is in memory:

and this seems OK:

So: how can I find the reason for this hard fault?

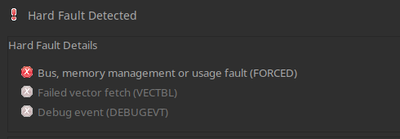

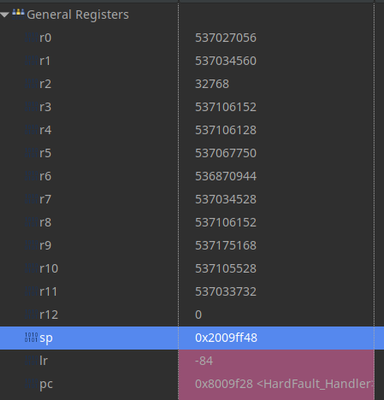

Next step... hard fault:

The CPU then:
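On Cortex-M, a common first step toward "how do I find the reason" is to read the fault status registers in the HardFault handler: CFSR (0xE000ED28) says which fault occurred, and bit 30 (FORCED) of HFSR (0xE000ED2C) shows that a configurable fault was escalated to HardFault. The sketch below only decodes a CFSR value into readable text; the table and cfsr_decode() are my own hypothetical helpers, not a CMSIS or ST API. On the target you would pass it SCB->CFSR from CMSIS, and also look at the stacked PC and LR to see which instruction faulted.

```c
#include <stddef.h>
#include <stdint.h>

/* One CFSR bit and a short explanation (hypothetical helper type). */
typedef struct {
    uint32_t mask;
    const char *name;
} fault_bit_t;

/* A subset of the CFSR bits from the Armv7-M / Armv8-M architecture.
   STKOF exists only on Armv8-M Mainline cores such as the Cortex-M33. */
static const fault_bit_t cfsr_bits[] = {
    { 1u << 0,  "IACCVIOL: instruction access violation (MPU)" },
    { 1u << 1,  "DACCVIOL: data access violation (MPU)" },
    { 1u << 4,  "MSTKERR: MPU fault while stacking on exception entry" },
    { 1u << 8,  "IBUSERR: instruction bus error" },
    { 1u << 9,  "PRECISERR: precise data bus error (address in BFAR)" },
    { 1u << 10, "IMPRECISERR: imprecise data bus error" },
    { 1u << 12, "STKERR: bus fault while stacking on exception entry" },
    { 1u << 16, "UNDEFINSTR: undefined instruction" },
    { 1u << 17, "INVSTATE: invalid execution state" },
    { 1u << 20, "STKOF: stack limit violation (Armv8-M)" },
    { 1u << 25, "DIVBYZERO: integer divide by zero" },
};

/* Collects the description of every set bit, up to 'max' entries.
   Returns the number of entries written to 'out'. */
size_t cfsr_decode(uint32_t cfsr, const char **out, size_t max)
{
    size_t count = 0;
    for (size_t i = 0; i < sizeof cfsr_bits / sizeof cfsr_bits[0]; i++) {
        if (count == max)
            break;
        if (cfsr & cfsr_bits[i].mask)
            out[count++] = cfsr_bits[i].name;
    }
    return count;
}
```

On an Armv8-M core such as the H563's Cortex-M33, a set STKOF bit points at a stack overflow, provided the stack limit registers (PSPLIM/MSPLIM) are configured.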


2023-12-09 09:38 AM - edited 2023-12-09 09:38 AM

It seems I've got it.

It just makes me feel bad when something happens on "my CPU" that I cannot explain. Not acceptable.

So I had an idea: maybe I am not the first one to hit this problem with the MP3 decoder, so I looked at the project's "issues" on Git,

and I found a comment:

So it seems to need a lot of room on the stack. I set the thread stack to 24 KB, just to check.

Result: no hard fault, and the ThreadX stack statistics now (!) show about 23 KB of stack used!
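The ThreadX stack statistics mentioned here are, as far as I understand, based on stack painting: the stack is filled with a known pattern before the thread runs, and the deepest word that no longer holds the pattern gives the high-water mark. The sketch imitates that on a plain array; STACK_FILL, stack_paint() and stack_high_water_bytes() are hypothetical names, and ThreadX uses its own internal fill value.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical fill value for this sketch. */
#define STACK_FILL 0xEFEFEFEFu

/* Paint the whole stack area before the thread starts using it. */
void stack_paint(uint32_t *stack, size_t words)
{
    for (size_t i = 0; i < words; i++)
        stack[i] = STACK_FILL;
}

/* Cortex-M stacks grow downward, from the top of the array toward
   stack[0]. Scanning up from stack[0], the first word that lost the
   pattern marks the deepest point ever reached. Returns bytes used. */
size_t stack_high_water_bytes(const uint32_t *stack, size_t words)
{
    size_t untouched = 0;
    while (untouched < words && stack[untouched] == STACK_FILL)
        untouched++;
    return (words - untouched) * sizeof(uint32_t);
}
```

A used word that happens to contain exactly the fill value is counted as unused, so the result is a lower bound.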

But I am still puzzled: why can the hard fault occur at the call of the function, before I can even step into it?

I called the same function to test whether there is a problem with the parameters/stack usage, and this worked without any problem. So the extra stack is only needed by the decoding and the tables (which I removed for this test) that are used in the analysis and decoding steps.

How can it "know" at the call that it will later call other functions needing too much stack?

And many thanks to all those who contributed their ideas.


2023-12-09 10:16 AM

Indeed, even your initial code allocates ~17 KB of stack space in that function:

sub.w sp, sp, #17280 ; 0x4380

If that allocation is not written directly in the code of that function, it is the result of function inlining performed by the compiler's optimizer.
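Because the compiler typically reserves the whole frame in the function prologue with one SP adjustment like the sub.w above, the stack demand exists from the function's first instructions, not only when the deeper decoding code runs. A minimal sketch contrasting a large stack-allocated scratch buffer with one in static RAM follows; the names are hypothetical and the 17280-byte size is simply taken from the disassembly. With GCC, -fstack-usage writes each function's frame size to a .su file, and -Wstack-usage=N warns when a function exceeds N bytes, which can catch such functions at build time.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define SCRATCH_BYTES 17280u   /* 0x4380, the frame size seen above */

typedef struct {
    uint8_t buf[SCRATCH_BYTES];
} scratch_t;

/* Stack-hungry variant: all of 'tmp' is reserved in the prologue.
   noinline keeps this frame in its own function instead of letting
   inlining fold it into (and enlarge) the caller's frame. */
__attribute__((noinline))
uint32_t checksum_stack_scratch(const uint8_t *in, size_t n)
{
    scratch_t tmp;
    assert(n <= SCRATCH_BYTES);
    memcpy(tmp.buf, in, n);
    uint32_t sum = 0;
    for (size_t i = 0; i < n; i++)
        sum += tmp.buf[i];
    return sum;
}

/* Stack-light variant: the scratch buffer lives in static RAM (.bss).
   Not reentrant: only one thread may use it at a time. */
static scratch_t g_scratch;

uint32_t checksum_static_scratch(const uint8_t *in, size_t n)
{
    assert(n <= SCRATCH_BYTES);
    memcpy(g_scratch.buf, in, n);
    uint32_t sum = 0;
    for (size_t i = 0; i < n; i++)
        sum += g_scratch.buf[i];
    return sum;
}
```

The static variant trades stack for permanently reserved RAM and loses reentrancy, which is usually acceptable for a single decoder thread.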


2023-12-09 10:38 AM

@Piranha, you're right. :)

But I still don't understand: when single-stepping, what generates a hard fault at the first instruction, where just some pointers are pushed onto the stack?

And even if the stack gets too big, it would just "kill" some other memory (for the USB stack...), so how, or what, triggers the hard fault here? Is it the RTOS?

Now:

and the ThreadX stack statistics:


2023-12-09 02:21 PM

You are single-stepping just one of the RTOS threads. As SysTick is still running and its interrupt is already pending, as soon as the CPU starts running, the RTOS kernel kicks in and switches your thread out. After that, all active threads of higher and equal priority get a chance to run before the RTOS comes back to your thread. To test this, you can disable all interrupts globally around that call and see what difference it makes.
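A minimal sketch of that test, assuming a GCC-style Arm toolchain (the `__arm__` check) and the CMSIS intrinsics __get_PRIMASK(), __disable_irq() and __set_PRIMASK() from the device header (stm32h5xx.h is assumed here). Saving and restoring PRIMASK, rather than unconditionally re-enabling interrupts, keeps it correct if interrupts were already masked. The host branch only models PRIMASK so the sketch can run on a PC; irq_save(), irq_restore() and call_with_irqs_masked() are hypothetical names. ThreadX also offers tx_interrupt_control(TX_INT_DISABLE), which returns the previous posture for restoring.

```c
#include <stdint.h>

#if defined(__arm__)
/* Target build: CMSIS intrinsics come from the device header
   (assumed to be stm32h5xx.h for an STM32H563 project). */
#include "stm32h5xx.h"

static uint32_t irq_save(void)
{
    uint32_t primask = __get_PRIMASK();  /* remember current mask state */
    __disable_irq();                     /* set PRIMASK: SysTick cannot preempt */
    return primask;
}

static void irq_restore(uint32_t primask)
{
    __set_PRIMASK(primask);              /* restore, so nesting works */
}
#else
/* Host-only stand-in so the sketch runs on a PC: PRIMASK is modelled
   as a variable (1 = interrupts masked). */
static uint32_t sim_primask = 0u;

static uint32_t irq_save(void)
{
    uint32_t primask = sim_primask;
    sim_primask = 1u;
    return primask;
}

static void irq_restore(uint32_t primask)
{
    sim_primask = primask;
}
#endif

/* Debug aid: run 'fn' with interrupts masked so the scheduler cannot
   switch this thread out while single-stepping through it. */
int call_with_irqs_masked(int (*fn)(void *), void *arg)
{
    uint32_t saved = irq_save();
    int rc = fn(arg);
    irq_restore(saved);
    return rc;
}
```

Keep this for debugging only: while interrupts are masked, SysTick, USB and every other interrupt are held off for the whole decode.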


2023-12-09 02:51 PM - edited 2023-12-09 02:53 PM

OK, this seems plausible. Brilliant idea. :)

I just didn't consider that an MS system would do real task switching.

I worked with OS-9 about 30 years ago, a real multi-user, multitasking system.

So after some simple tests with Azure/ThreadX, I thought it was only part of a useful RTOS.

Just one task without a $sleep call stops all other tasks. Nonsense.

But maybe it's better than I thought.

Thanks for your time.


2023-12-09 04:18 PM

All of those are real multithreading kernels. The "non-real" one you are thinking of is the MS-DOS-based Windows line, 1.0 through 9x/ME, which ended two decades ago. Also, Microsoft bought ThreadX just a few years ago, and they are already getting rid of it.

The basic scheduling principles of most multithreaded kernels are the same: preemptive context switching with round-robin processing within each priority. Therefore a single thread doesn't stop all other threads; the ones with a higher or the same priority continue running. And threads should use proper thread synchronization primitives, not a sleep function. The big desktop OSes employ more complex weighted algorithms that also let lower-priority threads run in a throttled way, but that is exactly why those are not real-time OSes.
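To make the scheduling rule concrete, here is a toy model of the selection step only (not ThreadX code): the highest-priority ready thread always runs, and threads sharing that priority take turns. toy_thread_t and pick_next() are hypothetical; when the rotation actually happens (time-slice expiry, a yield, or the thread blocking) is up to the kernel.

```c
#include <stdbool.h>
#include <stddef.h>

/* Toy model, not kernel code. Lower number = higher priority, the same
   convention ThreadX uses (0 is the highest). */
typedef struct {
    unsigned priority;
    bool ready;
} toy_thread_t;

/* Returns the index of the thread to run next, or -1 if none is ready.
   The best ready priority always wins; among threads sharing it, the
   search starts just after 'last', which yields round-robin order. */
int pick_next(const toy_thread_t *t, size_t n, int last)
{
    int best = -1;
    for (size_t i = 0; i < n; i++)
        if (t[i].ready && (best < 0 || t[i].priority < t[(size_t)best].priority))
            best = (int)i;
    if (best < 0)
        return -1;

    size_t start = (last < 0) ? 0u : ((size_t)last + 1u) % n;
    for (size_t k = 0; k < n; k++) {
        size_t i = (start + k) % n;
        if (t[i].ready && t[i].priority == t[(size_t)best].priority)
            return (int)i;
    }
    return best; /* not reached: 'best' itself always matches */
}
```

With two ready priority-1 threads and one priority-2 thread, the two priority-1 threads alternate and the priority-2 thread never runs until both of them stop being ready, for example by blocking on a semaphore or queue.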


2023-12-11 03:42 AM

> Also Microsoft bought the ThreadX just a few years ago and they are already getting rid of it.

What are they replacing it with? I thought ThreadX is now part of Azure RTOS; are they ditching that too? I'm just curious: I made my own compatibility layer in C++ to keep my business logic roughly compatible with both FreeRTOS and Azure RTOS (I implemented some std APIs with configurable OS specifics), so I'm curious what comes next.


2023-12-11 04:04 AM - edited 2023-12-23 02:34 AM

https://www.embedded.com/azure-rtos-goes-open-source-as-eclipse-threadx/

See:

BTW:

ThreadX can do time-slicing/task switching; it just doesn't do it with the initial settings.

That's why it didn't work in my first simple test.

I first had to read up on how to set it:

https://learn.microsoft.com/en-us/azure/rtos/threadx/chapter4#tx_thread_create
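A minimal sketch of where that setting lives: the time_slice argument of tx_thread_create() (TX_NO_TIME_SLICE disables it for that thread), next to the stack size. The parameter order follows the tx_thread_create() documentation linked above; the priority, time-slice and stack values, the thread and function names, and the host-side mock (used only so this compiles on a PC without ThreadX) are assumptions for illustration.

```c
#if defined(__arm__)
#include "tx_api.h"                      /* real ThreadX on the target */
#else
/* Host-only mock so the sketch compiles on a PC. The parameter list
   mirrors tx_thread_create(); the body only records a few arguments. */
#define VOID void
typedef char CHAR;
typedef unsigned int UINT;
typedef unsigned long ULONG;
#define TX_SUCCESS       ((UINT) 0)
#define TX_AUTO_START    ((UINT) 1)
#define TX_NO_TIME_SLICE ((ULONG) 0)
typedef struct {
    ULONG stack_size;
    UINT priority;
    ULONG time_slice;
} TX_THREAD;

static UINT tx_thread_create(TX_THREAD *thread, CHAR *name,
                             VOID (*entry)(ULONG), ULONG entry_input,
                             VOID *stack_start, ULONG stack_size,
                             UINT priority, UINT preempt_threshold,
                             ULONG time_slice, UINT auto_start)
{
    (void)name; (void)entry; (void)entry_input; (void)stack_start;
    (void)preempt_threshold; (void)auto_start;
    thread->stack_size = stack_size;
    thread->priority = priority;
    thread->time_slice = time_slice;
    return TX_SUCCESS;
}
#endif

/* Hypothetical values for illustration. */
#define DECODER_STACK_BYTES (24u * 1024u)  /* the 24 KB tried earlier */
#define DECODER_PRIORITY    10u
#define DECODER_TIME_SLICE  5u             /* ticks; TX_NO_TIME_SLICE = off */

static TX_THREAD decoder_thread;
static ULONG decoder_stack[DECODER_STACK_BYTES / sizeof(ULONG)];

static VOID decoder_entry(ULONG input)
{
    (void)input;                         /* decode loop would go here */
}

UINT start_decoder_thread(void)
{
    /* preempt_threshold == priority disables preemption-threshold. */
    return tx_thread_create(&decoder_thread, (CHAR *)"mp3 decoder",
                            decoder_entry, 0u,
                            decoder_stack, sizeof decoder_stack,
                            DECODER_PRIORITY, DECODER_PRIORITY,
                            DECODER_TIME_SLICE, TX_AUTO_START);
}
```

Time-slicing only rotates between ready threads of the same priority; a higher-priority thread that never blocks still starves lower-priority ones.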


2023-12-11 04:53 AM

Thanks, I like that news, because the more I use Azure RTOS, the more I like it. And M$ provided pretty good documentation.


2023-12-13 04:11 AM

Hey, did you make it? I mean, did you solve the FileX hard fault problems?

Because I ran into them recently. Hard faults all the way. Weird, because my simple test passed on all devices, so I made a more elaborate test that created, renamed and deleted more files and also did longer transfers, and it started to crash and misbehave. What I figured out is that it's not (currently) related to a specific medium: I get similar issues on both USB drives and SD cards. So I create a file, read and write. It seems to work. But when I do more file operations, it crashes or misbehaves. The latest weird behavior: everything works except deleting files. It worked before.

What I noticed about ThreadX: it's super sensitive about memory. The documentation doesn't say how big the memory pools or the thread stacks should be. However, tuning the pools and the stacks of the right threads made the hard faults go away. Yet I'm not entirely sure it's totally fixed: without the MPU, the middleware can still overwrite things; it just doesn't crash the app on the spot. If anyone asks: the USB host thread for MSC needs 64 KB of pool and 44 KB of stack. However, the thread that actually uses the file system probably needs a bigger stack. I moved all file operations to my main application thread (I mean an RTOS thread, not the initial thread), and I allocated all available memory to it so I don't have to worry about it anymore. It took me about 48 hours to figure out the minimal configurations for all the middleware. If you use an STM32U5x9, I can share my memory map for it. It might not be perfect, but it seems to work and leaves enough RAM for a big app with a GUI.
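One way to keep such tuning manageable, sketched under assumptions: put all pool and stack sizes in one place and let the compiler check them against a RAM budget. Only the 64 KB and 44 KB USB host MSC figures come from this post; APP_THREAD_STACK, RTOS_RAM_BUDGET and rtos_ram_left() are hypothetical placeholders. The sizes would then be passed to tx_byte_pool_create() and tx_thread_create().

```c
#include <stddef.h>

#define KB(n) ((size_t)(n) * 1024u)

/* Sizes quoted above for the USB host MSC class. */
#define USBH_MSC_POOL_BYTES  KB(64)
#define USBH_THREAD_STACK    KB(44)

/* Hypothetical placeholders: tune for your own application. */
#define APP_THREAD_STACK     KB(32)
#define RTOS_RAM_BUDGET      KB(192)

#define RTOS_RAM_COMMITTED \
    (USBH_MSC_POOL_BYTES + USBH_THREAD_STACK + APP_THREAD_STACK)

/* Fails the build instead of failing at run time. */
_Static_assert(RTOS_RAM_COMMITTED <= RTOS_RAM_BUDGET,
               "RTOS memory budget exceeded");

/* Bytes still free in the budget for other pools or stacks. */
size_t rtos_ram_left(void)
{
    return RTOS_RAM_BUDGET - RTOS_RAM_COMMITTED;
}
```

A compile-time check cannot tell whether a stack is big enough, only that the sizes fit; the stack statistics are still needed for that.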

Since we are loosely discussing the quality of this stuff: it's not the best design when it crashes instead of just returning a civilized error code. But I remember having crashes with FatFs too. There's one more thing: the worst bug ever in FatFs. When you open a file, you pass it a file handle. If that handle is not ZEROED, FatFs will not crash; it will start to exhibit various undefined behaviors, a real hell to debug, diagnose, test and fix. Well, FileX seems to initialize the file handles properly. Still, I zero them to be extra sure and avoid stepping on mines ;)

The worst thing when coding in C and C++ is to assume things: that memory is zeroed, that an index is within range, that a pointer is not dangling, and so on. Unfortunately, our favorite FS tools have a lot of such assumptions in them.
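A sketch of the defensive style described above, using a hypothetical handle type rather than the real FatFs FIL or FileX FX_FILE: initialize the handle explicitly, and validate pointers and ranges so the caller gets an error code instead of a crash. With FatFs itself, the equivalent would be zeroing the FIL object (for example with memset) before calling f_open().

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical error codes and handle, standing in for a file-system
   handle such as FatFs's FIL or FileX's FX_FILE. */
typedef enum { FS_OK = 0, FS_ERR_NULL, FS_ERR_RANGE } fs_err_t;

typedef struct {
    uint32_t pos;
    uint32_t size;
    void *owner;          /* internal pointer: must never start as garbage */
} file_handle_t;

/* Put the handle into a known state instead of assuming it is zeroed.
   Assigning a zero-initialized compound literal also sets 'owner' to NULL. */
fs_err_t handle_init(file_handle_t *h, uint32_t size)
{
    if (h == NULL)
        return FS_ERR_NULL;
    *h = (file_handle_t){0};
    h->size = size;
    return FS_OK;
}

/* Check the request and return an error code instead of crashing later. */
fs_err_t handle_seek(file_handle_t *h, uint32_t pos)
{
    if (h == NULL)
        return FS_ERR_NULL;
    if (pos > h->size)
        return FS_ERR_RANGE;
    h->pos = pos;
    return FS_OK;
}
```

Returning an error leaves the decision to the caller, which can log it, retry, or close the file, instead of the middleware corrupting memory silently.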