STMicroelectronics Community

Clock cycle shift on GPIO output STM32F103

2024-05-11 9:49 PM

Dear Community,

I am porting an old application from AVR to STM32, and I am facing a strange timing issue.

In a nutshell, the application reads a sector (512 bytes) from an SD card and outputs the buffer contents to a GPIO pin with a 4 µs cycle (3 µs low, 1 µs data signal).

The SD card read works fine, and I have written a small piece of assembly code that outputs the GPIO signal with precise MCU cycle counting.

Measured with the DWT cycle counter in the debugger, the timing is very stable and precise (288 cycles for the full 4 µs).

However, with a 24 MHz logic analyzer I can see the signal shift by 1 or 2 CPU cycles, which introduces delay.
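
The figures above can be cross-checked with a little integer arithmetic. This is a minimal sketch, assuming SYSCLK = 72 MHz (inferred from 288 cycles per 4 µs); the function names are illustrative only. It also shows that, at this clock, one sample of a 24 MHz logic analyzer spans 3 CPU cycles (about 41.7 ns), which is worth keeping in mind when reading 1- or 2-cycle shifts off the capture.

```c
#include <assert.h>
#include <stdint.h>

/* CPU cycles that fit in `period_ns` nanoseconds at a core clock of `sysclk_hz`. */
static uint32_t cycles_in_ns(uint32_t sysclk_hz, uint32_t period_ns)
{
    /* 64-bit intermediate: 72e6 * 4000 does not fit in 32 bits. */
    return (uint32_t)((uint64_t)sysclk_hz * period_ns / 1000000000u);
}

/* CPU cycles elapsed between two consecutive logic-analyzer samples. */
static uint32_t cycles_per_sample(uint32_t sysclk_hz, uint32_t sample_hz)
{
    assert(sample_hz != 0);
    return sysclk_hz / sample_hz;
}

/* Analyzer sample period in picoseconds (integer, truncated). */
static uint32_t sample_period_ps(uint32_t sample_hz)
{
    assert(sample_hz != 0);
    return (uint32_t)(1000000000000ull / sample_hz);
}
```

For example, `cycles_in_ns(72000000u, 1000u)` gives 72 cycles for the 1 µs data slot, and `cycles_per_sample(72000000u, 24000000u)` gives 3.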

I have tried writing to ODR directly and using BSRR, with no luck.

Attached :

- Screenshot of the logic analyzer

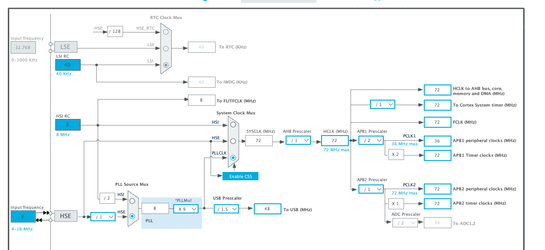

Clock configuration

Port configuration:

To be honest, I do not know where to look.

Labels: STM32F1 Series

2024-05-11 11:32 PM

Even something as “lowly” as the STM32F1 does not guarantee cycle-accurate timing. Where a system has things like flash accelerators and multiple clocks, exact timing becomes unpredictable, particularly if other activity such as DMA or interrupts is going on.

(I think the STM32F0 data sheets, in contrast, do mention cycle-by-cycle predictability.)

You could reduce the number of things “going on” by putting your delay code inline rather than in a subroutine; this avoids the unpredictable delay associated with the jump and with pushing/popping the PC to/from the stack.

How certain are you of the delay error? Are you using a… Is it consistent, or does it happen only on some cycles? I see you are using HSE, but is it a crystal or a lower-accuracy ceramic resonator?

You do not go into why timing is so critical for your application. But if it is, you might be better off using DMA driven by a timer to pump your pre-processed pattern to the BSRR register.
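
A minimal sketch of the pre-processed pattern idea for a single data pin: each encoded byte is expanded into one 32-bit BSRR word per 1 µs slot, so a timer issuing one DMA request per microsecond (setup not shown) would copy the next word to the port's BSRR. The MSB-first bit order, the pin number, and the function name are assumptions for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SLOTS_PER_BIT 4u /* 4 us bit cell split into 1 us timer ticks */

/* Expand `len` encoded bytes into BSRR words for one output pin.
 * Each bit becomes SLOTS_PER_BIT words: the first drives the pin to the
 * bit value (1 us data slot), the rest hold it low (3 us pause).
 * Bits are sent MSB first (assumption). `out` must hold
 * len * 8 * SLOTS_PER_BIT words. Returns the number of words written. */
static size_t build_bsrr_pattern(const uint8_t *data, size_t len,
                                 unsigned pin, uint32_t *out)
{
    assert(pin < 16);
    const uint32_t set = 1u << pin;           /* BSy: bits 0..15 set the pin */
    const uint32_t reset = 1u << (pin + 16u); /* BRy: bits 16..31 reset it */
    size_t n = 0;
    for (size_t i = 0; i < len; ++i) {
        for (int bit = 7; bit >= 0; --bit) {
            out[n++] = ((data[i] >> bit) & 1u) ? set : reset;
            for (unsigned s = 1; s < SLOTS_PER_BIT; ++s)
                out[n++] = reset;
        }
    }
    return n;
}
```

On the target, the DMA channel would be set up memory-to-peripheral with 32-bit transfers, memory increment enabled, and the peripheral address pointing at the port's BSRR (for example `&GPIOA->BSRR` with the CMSIS device header). Because BSRR only affects the pins whose bits are set in each word, other pins on the port are not disturbed.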

2024-05-12 4:51 AM

Thanks Danish1,

Timing is critical because I am interfacing an old Apple II SDISK with an STM32 to emulate the floppy disk drive. The data transfer protocol is very specific: there is no clock pulse, only a sequence of 1 µs data signal, 3 µs pause, and so on, for 402 encoded bytes (256 bytes before encoding). I really need something very accurate. It works like a charm on an AVR ATmega328P.

I am new to the STM32 and very surprised by how unpredictable the timing is.

I have tried inlining the wait procedure; it helps a bit, but it is still not really accurate.

I will try the DMA approach (even though I know nothing about timer-driven DMA on ARM). Do you have an example I could start from to learn how it works?

Vincent

2024-05-12 5:48 AM

I have looked at how DMA works. Does this mean I have to convert each byte into an array of 8 uint32 values formatted for BSRR?

So for 402 bytes I need an 8x402 array of uint32 to feed the DMA, right?

If I use a circular buffer, how do I feed it?

How do I handle the 1 µs data pulse and the 3 µs pause? With another timer? If so, how do I synchronize the two timers?

Sorry for all these newbie questions.

Thanks

Vincent
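
Sizing the buffer is plain arithmetic. A sketch, assuming one DMA entry per 1 µs slot (4 slots per 4 µs bit cell, 8 bits per byte); the entry width is 4 bytes for BSRR words or 2 bytes for 16-bit ODR writes. The function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* DMA entries needed to play `bytes` bytes when every bit occupies
 * `slots_per_bit` timer ticks. */
static size_t dma_entries(size_t bytes, size_t slots_per_bit)
{
    return bytes * 8u * slots_per_bit;
}

/* Memory footprint of that buffer for a given entry width in bytes
 * (1, 2 or 4, matching the DMA memory data size options). */
static size_t dma_buffer_bytes(size_t bytes, size_t slots_per_bit,
                               size_t entry_size)
{
    assert(entry_size == 1 || entry_size == 2 || entry_size == 4);
    return dma_entries(bytes, slots_per_bit) * entry_size;
}
```

For the 402-byte sector this gives 12864 entries, i.e. 51456 bytes as `uint32_t` BSRR words or 25728 bytes as `uint16_t` ODR values. Both exceed the 20 Kbytes of SRAM on, for example, an STM32F103C8, which is one reason the circular half-buffer approach suggested later in the thread is attractive.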

2024-05-12 6:23 AM

If your data pins are all on one port, you could use a 4x402 buffer (zeroed first!), write your encoded data into every 4th location, and use DMA paced by a 1 µs timer to blow out the entire buffer. Or maybe I missed a detail... ;)

Welcome to the STM32! There are probably some application notes that can help with DMA and timer setup, and the CubeMX examples for the F103 may include something helpful; worth a look.
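
David's layout can be sketched as follows, assuming (as he does) 8 data pins on port bits 0..7, one 16-bit ODR write per 1 µs slot, and that every other output pin on the port may be driven low. The function name is hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* One 16-bit ODR value per 1 us slot: each data byte goes into every
 * 4th slot, and the three slots after it are zero (all pins low).
 * `buf` must hold len * 4 entries. */
static void fill_odr_bytewide(const uint8_t *data, size_t len, uint16_t *buf)
{
    assert(data != NULL && buf != NULL);
    memset(buf, 0, len * 4u * sizeof buf[0]); /* "zeroed first!" */
    for (size_t i = 0; i < len; ++i)
        buf[4u * i] = data[i];                 /* data slot, then 3 low slots */
}
```

Each pin then sees a 1 µs level followed by a 3 µs low pause per byte, provided the DMA is paced at one transfer per microsecond.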

2024-05-12 7:06 AM

Hello David,

Thanks for your answer. I am discovering the power of the STM32 and I like it ;)

Putting the data in every 4th location is a very good idea. I have only 1 data pin, so does that mean I need 4 x 8 (bits) x 402 (bytes) entries?

Is there a way to refill the buffer being sent, using circular mode? If that makes sense, how do I detect the DMA position?

Vincent

2024-05-12 7:15 AM

You can set the DMA to circular mode over a buffer twice the size of your data block, then use the half-transfer and transfer-complete callbacks to fill in new data.

That way the DMA writes a continuous data stream without interruption, and you have time to fill the half it will send next.
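
A host-side simulation of the circular double-buffer scheme: the ring holds two halves, the DMA (simulated here by a loop) reads it continuously, and the half-transfer and transfer-complete events each refill the half that has just been sent. This is a sketch only; the ring size, the `producer_t` type, and all function names are hypothetical, and on the target the two handlers would run from the DMA channel's half-transfer (HTIF) and transfer-complete (TCIF) interrupts.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define HALF_WORDS 32u                /* entries per half (hypothetical) */
#define RING_WORDS (2u * HALF_WORDS)

/* Hypothetical producer: a source of pre-expanded BSRR words. */
typedef struct {
    const uint32_t *src; /* full pattern to play */
    size_t src_len;
    size_t next;         /* next source word to copy into the ring */
    uint32_t idle;       /* word used once the source is exhausted */
} producer_t;

static uint32_t ring[RING_WORDS];

/* Refill one half of the ring; `half` is 0 or 1. */
static void refill_half(producer_t *p, unsigned half)
{
    uint32_t *dst = &ring[half * HALF_WORDS];
    for (size_t i = 0; i < HALF_WORDS; ++i)
        dst[i] = (p->next < p->src_len) ? p->src[p->next++] : p->idle;
}

/* The DMA has just finished reading the named half, so it can be
 * overwritten while the other half is being sent. */
static void on_half_transfer(producer_t *p)     { refill_half(p, 0); }
static void on_transfer_complete(producer_t *p) { refill_half(p, 1); }

/* Simulated circular DMA channel: "sends" `count` words into `out`,
 * raising the two events at the middle and the end of the ring. */
static void simulate_circular_dma(producer_t *p, uint32_t *out, size_t count)
{
    refill_half(p, 0); /* prime both halves before starting */
    refill_half(p, 1);
    for (size_t n = 0; n < count; ++n) {
        size_t pos = n % RING_WORDS;
        out[n] = ring[pos];
        if (pos == HALF_WORDS - 1)
            on_half_transfer(p);
        else if (pos == RING_WORDS - 1)
            on_transfer_complete(p);
    }
}
```

The constraint this makes explicit is that each refill must finish before the DMA wraps around into that half again, i.e. within `HALF_WORDS` µs when one word is sent per microsecond. It also shows why the half-transfer event is the moment to update the first half: at that point the first half has just been sent.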

2024-05-12 7:24 AM

I see there is a half-transfer interrupt. Could I use it to update the first half of the buffer? Would that work?

Vincent

2024-05-12 7:52 AM

The GPIO ports are 16-bit, right? The DMA writes to the entire port's ODR (all 16 bits in a single write cycle), so your buffer is 4 x 402 half-words, i.e. 4 x 2 (bytes) x 402 bytes, and it should be at least 16-bit aligned. The catch with this approach is that the DMA writes to the entire port, so any pin configured as a GPIO output (including any control signals of your hardware interface that are assigned to a pin on that port) will get the corresponding bit values from the buffer. Wait... is your hardware interface 1 or 8 bits wide (for the data)? Are there control signals too? (I assumed 8 bits, hence the ODR suggestion. If it's only 1 bit, the buffer depth increases 8x.) Sorry, I'm not familiar with the details of the interface you're synthesizing, and that directly affects the DMA buffer's content.

(I'm not familiar with the F103's DMA, so my suggestion might be totally inappropriate.) If I wanted to be fancy, I'd set the DMA up as circular over a double-size buffer and use the half-complete and complete interrupts to know which half I could scribble in while the DMA was blowing out the other half.
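
The caveat about ODR can be illustrated with two helpers: an ODR value must carry the state of every other output pin on the port, because each DMA write sets all 16 bits, whereas a BSRR word only affects the pins whose bits are set. A minimal sketch; the pin numbers and function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* ODR value for one slot: the data pin takes `level`, every other pin
 * keeps the value in `others`, which must already encode the state of
 * all other outputs on the port (ODR writes all 16 bits at once). */
static uint16_t odr_value(uint16_t others, unsigned pin, int level)
{
    assert(pin < 16);
    const uint16_t mask = (uint16_t)(1u << pin);
    return (uint16_t)((others & ~mask) | (level ? mask : 0u));
}

/* Equivalent BSRR word: only the data pin is touched, so the state of
 * the other outputs does not need to be known when the buffer is built. */
static uint32_t bsrr_value(unsigned pin, int level)
{
    assert(pin < 16);
    return level ? (1u << pin) : (1u << (pin + 16u));
}
```

With ODR, a control signal that changes while the buffer is playing would be overwritten by whatever the buffer holds; with BSRR the buffer never mentions it. The price is 32-bit entries instead of 16-bit ones.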

2024-05-12 2:15 PM - edited 2024-05-12 4:17 PM

Ah, now I see (a little) more! You're interfacing with this:

https://www.bigmessowires.com/2021/11/12/the-amazing-disk-ii-controller-card/

and

https://embeddedmicro.weebly.com/apple-2iie.html

...right?

If so, instead of bit-banging it with a GPIO and DMA + timer, maybe you could use either a USART in synchronous mode or SPI. Just a thought... ;)
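
A sketch of the SPI idea, assuming the SPI bit clock is exactly 1 MHz so that each SPI bit lasts 1 µs: every disk bit then becomes four SPI bits (the bit itself followed by three zeros), so one encoded byte turns into 32 SPI bits, or two 16-bit frames. MSB-first ordering and the function names are assumptions. On the F103 the SPI baud rate is the peripheral clock divided by a power of two (2 to 256), so an exact 1 MHz needs a suitable clock tree, for example a 64 MHz APB2 clock divided by 64 for SPI1; from 72 MHz the nearest rates are 1.125 MHz and 562.5 kHz.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Expand one encoded byte into its 32-bit SPI bit stream: each disk bit
 * becomes the nibble (bit, 0, 0, 0), i.e. a 1 us slot then 3 us low. */
static uint32_t spi_expand_byte(uint8_t b)
{
    uint32_t out = 0;
    for (int bit = 7; bit >= 0; --bit) {
        out <<= 4;         /* make room for this bit's 4 us cell */
        if ((b >> bit) & 1u)
            out |= 0x8u;   /* 1000: pulse in the first microsecond */
    }
    return out;
}

/* Split the stream into two 16-bit frames (16-bit data frame format),
 * in transmit order. */
static void spi_frames16(uint8_t b, uint16_t frames[2])
{
    assert(frames != NULL);
    const uint32_t w = spi_expand_byte(b);
    frames[0] = (uint16_t)(w >> 16);     /* sent first */
    frames[1] = (uint16_t)(w & 0xFFFFu);
}
```

Compared with BSRR words, each encoded byte needs only 4 bytes of SPI data instead of 128 bytes, though the transmitter would still have to be fed continuously (for example by DMA) to avoid gaps between frames.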
