Debug ST-Link MINIE using the ADC , GP DMA and TIM strange behaviour

2024-03-28 4:28 AM - edited 2024-06-28 5:00 AM

Hi everyone!

I'm using an STM32U535 without HAL, with TIM1, ADC1 and GPDMA1_Channel1.

TIM1 triggers ADC1 periodically (every 312.5us). ADC1 (MSIK=16MHz, SMP=0b011=20 ADC clock cycles, 14 bits) converts a sequence of 12 channels with 4x oversampling and no shift. ADC1 triggers GPDMA1_Channel1 to move "ADC->DR" into "int32_t iCampioncini[12]", and when GPDMA1 has completed the twelve data transfers it raises the interrupt request handled by GPDMA1_Channel1_IRQHandler(void){...}

Everything works fine.

The TIM1 trigger also has an interrupt that raises and lowers a pin (oscilloscope green).

GPDMA1_Channel1_IRQHandler raises and lowers a pin (oscilloscope yellow).

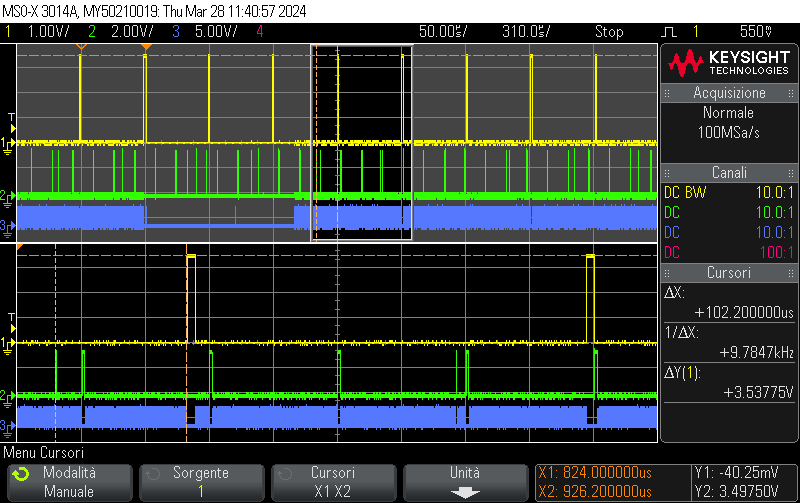

The time interval between the green and yellow traces on the oscilloscope is as expected: (17+20) / 16MHz * 12 samples * 4 oversampling = 111us. This is the time between the start of the conversion sequence (timer trigger) and the DMA transfer complete for the 12 samples.
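As a cross-check of the arithmetic above, here is a minimal C sketch that recomputes the expected trigger-to-transfer-complete time. The numbers come from the formula in this post (17 + 20 ADC clock cycles per conversion, 16MHz, 12 channels, 4x oversampling); the macro and function names are only illustrative and are not part of my firmware.

```c
#include <stdint.h>

/* Values taken from the post; names are hypothetical, for illustration only. */
#define ADC_KERNEL_CLOCK_HZ   16000000u  /* MSIK = 16 MHz */
#define CONVERSION_CYCLES     17u        /* first term of the post's formula */
#define SAMPLING_CYCLES       20u        /* SMP = 0b011 */
#define CHANNELS_IN_SEQUENCE  12u
#define OVERSAMPLING_RATIO    4u

/* Duration of a number of ADC clock cycles, in nanoseconds (truncated). */
uint32_t adc_cycles_to_ns(uint32_t cycles)
{
    return (uint32_t)(((uint64_t)cycles * 1000000000u) / ADC_KERNEL_CLOCK_HZ);
}

/* Expected time from the timer trigger to the DMA transfer complete, in ns. */
uint32_t adc_sequence_time_ns(void)
{
    uint32_t cycles = (CONVERSION_CYCLES + SAMPLING_CYCLES)
                    * CHANNELS_IN_SEQUENCE * OVERSAMPLING_RATIO;
    return adc_cycles_to_ns(cycles);
}
```

With these values adc_sequence_time_ns() gives 111000 ns, i.e. the 111us measured on the oscilloscope, which fits well inside the 312.5us TIM1 period.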

Everything works fine... but

If I use the debugger, for example enabling or disabling a breakpoint on the first instruction of main() (which is never hit during the while(1) loop), something strange happens. The same strange behaviour appears when I terminate the debugger (Ctrl+F2). On power-on with no debugger connected, everything works fine.

Here is the strange behaviour:

1 - The ADC measurements are almost exactly halved.

2 - The time between the green and yellow oscilloscope traces changes with every debug communication (for example, enabling or disabling the breakpoint mentioned above, which is never hit). These are the intervals I recorded between the two traces, in sequence: 111us, 102us, 19us, 65us, 74us, 83us, 19us, 102us, 74us, 102us, 65us, 102us, 65us, 102us, 102us, 102us, 46us, 111us... and it goes on randomly.

These intervals seem to be spaced roughly 9us apart, and when the interval randomly returns to 111us the ADC value is no longer halved but correct.

3 - The registers of ADC1, GPDMA1_Channel1 and TIM1 are unchanged every time I check them.

4 - When I reset the chip from the debugger and restart, everything works fine again, but my debugging options are severely limited because, for example, I can't insert a breakpoint without affecting the measurements!
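A side note on the "roughly 9us" spacing in point 2: one oversampled channel takes (17+20) cycles * 4 / 16MHz = 9.25us, so the recorded intervals can be compared against whole multiples of that slot. The sketch below is only arithmetic on the values listed above, not a diagnosis; the macro and function names are hypothetical.

```c
#include <stdint.h>

/* One oversampled channel: (17 + 20) cycles * 4x oversampling / 16 MHz. */
#define CHANNEL_SLOT_NS 9250u

/* Nearest whole number of channel slots for a measured interval. */
uint32_t nearest_slot_count(uint32_t interval_ns)
{
    return (interval_ns + CHANNEL_SLOT_NS / 2u) / CHANNEL_SLOT_NS;
}

/* Difference between the measurement and that whole number of slots, in ns. */
int32_t slot_residual_ns(uint32_t interval_ns)
{
    uint32_t slots = nearest_slot_count(interval_ns);
    return (int32_t)interval_ns - (int32_t)(slots * CHANNEL_SLOT_NS);
}
```

Applied to the recorded values, 111us, 102us, 83us, 74us, 65us, 46us and 19us land on 12, 11, 9, 8, 7, 5 and 2 slots respectively, each within 0.5us, which is about the reading accuracy of the cursors. So every interval looks like a whole number of channel conversions, with only the 111us case covering all 12 channels.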

Does anyone have an idea how to solve this problem?

Where am I going wrong?

THE PICTURE ABOVE: yellow = DMA interrupt handler, at every transfer complete of the 12-sample sequence; blue = toggles when idle in the while(1) loop; green_1 = interrupt from TIM2 every 100us; green_2 = TIM1 triggering the ADC sequence (every 312.5us). In this example picture, please note the cursors: DeltaX = 102us.

-----------------------------------------------------------------------------------------------------------------------------------------

Here is some additional information:

ST-Link MINIE, just updated

STM32CubeIDE 1.15.0, just updated

int main(void){
    __disable_irq();    // BREAKPOINT HERE (DISABLED)
    BspRCC_Init();      // SYSCLK=160MHz from MSIS=16MHz via PLL1
    Analog_Init();      // Init TIM1, ADC1, GPDMA1_Channel1
    __enable_irq();

    while(1){
        // raise and lower the pin (oscilloscope blue)
    }
}

Labels: Bug-report, STM32CubeIDE, STM32U5 series

2024-05-02 2:22 AM

I'll try to simplify the description of my problem.

Can I change the behaviour of the following program simply by inserting, enabling and/or disabling a breakpoint (in STM32CubeIDE) when execution is already in the infinite while(1) loop? Please note that the breakpoint is never reached!

Can this simple SWD communication interfere with the results of ADC1, GPDMA1 and TIM1?

Please note that without SWD communication everything works fine!

Has anyone else experienced similar phenomena?

int main(void) {
    instruction;            // >>> breakpoint here <<<
    SomePeripheral_Init();  // GPDMA1, TIM1, ADC1

    while(1){
    }
}

void SomePeripheral_interrupt_Handler(void){
    instructions;
}

I'll be grateful for any help. I suggested migrating from Texas Instruments to STM32 in my company, and it would be really unpleasant for me if...

2024-07-01 8:25 AM

Bad news.

Enabling and disabling a breakpoint, even one that is never hit, changes the behaviour of the MCU.

I tested it with different debuggers (STLINK V2 and STLINK V3 MINIE) and with different MCUs (STM32U535 and STM32F103).

Maybe this is standard behaviour and I was simply unaware of it. Maybe everyone but me already knew. However, on other (non-ST) MCUs the hardware breakpoints are not invasive, or at least not so invasive.
