STM32F37x SDADC data count offset change

2013-03-25 10:25 AM

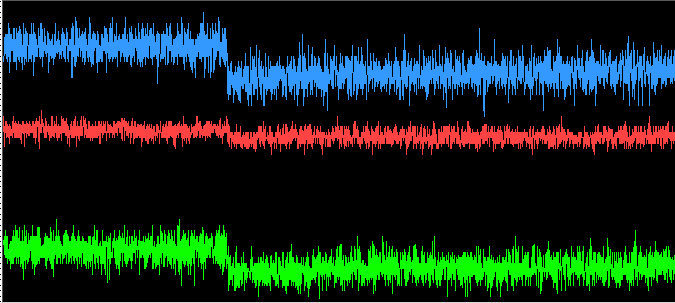

I've been working with the SDADC hardware on the STM32F37x. I'm using an SDADC clock of 6 MHz and software-triggering three injected channels in single-ended zero-reference mode every millisecond. When I apply DC inputs to the three channels, I see a periodic 'shift' in the output: the counts drop by around 10 and then slowly drift back up to the previous average level. The shift appears to be temperature dependent: when I apply a heat gun to the PCB from a distance, the shifts occur more frequently. Has anyone else seen this behavior? Could this be a function of the underlying SDADC hardware implementation? When I monitor the inputs with an oscilloscope, I do not see the shift that the SDADC reports.

I welcome any ideas or comments. I'm including a screenshot of the plotted data around the time of the 'shift', which occurs in the counts reported for all channels.

#stm32f37x #sdadc

Labels: SDADC, STM32F3 Series

2013-03-26 7:40 AM

Two guesses:

(a) The peripheral is automatically applying a temperature calibration correction periodically. I don't see any mention of this in the data sheet or reference manual.

(b) The sigma-delta logic is applying a correction to bit flips in its front-end comparator.

The 10-count shift is within the data sheet offset spec for single-ended inputs. Perhaps STOne-32 could shed some light on this. The time between offset corrections at room temperature might be a good clue.

Cheers, Hal

2013-03-26 8:49 AM

Hi Hal,

Thanks kindly for your thoughts! To answer your point that "the time between offset corrections at room temperature might be a good clue": what I'm seeing at room temperature is roughly 25 seconds between shifts. Temperature compensation seems like a possible cause, but as you mentioned, the documentation doesn't say much either way.
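
One way to put a number on the time between shifts, rather than reading it off a plot, is to timestamp each downward step in firmware. The following is a minimal sketch and not code from this thread: the names `shift_detector_t` and `shift_detector_update`, the 6-count threshold, and the 1/64 baseline rate are all hypothetical, and it assumes the readings fed in are quiet enough (for example after averaging) that noise alone does not exceed the threshold.

```c
#include <stdint.h>

#define SHIFT_THRESHOLD_COUNTS 6   /* hypothetical: a bit over half the ~10-count step */
#define BASELINE_DIVISOR       64  /* hypothetical: baseline moves 1/64 of the error per sample */

typedef struct {
    int32_t  baseline_q8;    /* slow running average of the counts, scaled by 256 */
    uint32_t last_shift_ms;  /* timestamp of the previous detected shift */
    int      primed;         /* 0 until the first sample seeds the baseline */
    int      seen_shift;     /* 0 until the first shift has been detected */
} shift_detector_t;

/* Feed one reading with its timestamp. Returns 1 when a downward step is
 * detected; *interval_ms then holds the time since the previous step
 * (0 for the first one). Returns 0 otherwise. */
static int shift_detector_update(shift_detector_t *d, int16_t counts,
                                 uint32_t now_ms, uint32_t *interval_ms)
{
    int32_t scaled = (int32_t)counts * 256;

    if (!d->primed) {
        d->baseline_q8 = scaled;
        d->primed = 1;
        return 0;
    }

    if (d->baseline_q8 - scaled >= SHIFT_THRESHOLD_COUNTS * 256) {
        *interval_ms = d->seen_shift ? (now_ms - d->last_shift_ms) : 0u;
        d->last_shift_ms = now_ms;
        d->seen_shift = 1;
        d->baseline_q8 = scaled;   /* re-seed so one step is reported once */
        return 1;
    }

    /* Track slow drift, including the gradual recovery after a step. */
    d->baseline_q8 += (scaled - d->baseline_q8) / BASELINE_DIVISOR;
    return 0;
}
```

Logging the reported interval together with a board temperature reading would show how the period changes with temperature, which is the clue Hal asks about.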

2013-10-23 12:49 AM

Were you able to solve this problem in the end?

I'm having the exact same problem. The input is in differential mode, sampled at 12 kHz. 120 samples are summed into a signed integer and sent to the PC. The reading is very stable because of this averaging, but I also see the periodic shifts mentioned above.
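
The block summing described here can be sketched as follows. This is an illustration only, not the poster's code: `block_acc_t`, `block_acc_push`, and `BLOCK_LEN` are hypothetical names, and the samples are assumed to be the signed 16-bit values read from the SDADC data register.

```c
#include <stdint.h>

#define BLOCK_LEN 120u  /* samples per block, as described in the post */

typedef struct {
    int32_t  sum;    /* running sum of signed 16-bit samples */
    uint32_t count;  /* samples accumulated so far in this block */
} block_acc_t;

/* Add one sample. Returns 1 and writes the block sum to *out_sum when the
 * block is complete, then resets for the next block. Returns 0 otherwise. */
static int block_acc_push(block_acc_t *acc, int16_t sample, int32_t *out_sum)
{
    acc->sum += sample;
    acc->count++;
    if (acc->count < BLOCK_LEN) {
        return 0;
    }
    *out_sum = acc->sum;
    acc->sum = 0;
    acc->count = 0;
    return 1;
}
```

At 12 kHz this produces 100 sums per second, and the worst-case magnitude (120 × 32768 = 3,932,160) fits comfortably in an `int32_t`. Summing reduces random noise but not an offset common to every sample: a 10-count step on each sample appears as a step of 1200 in the sum.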

2014-02-03 11:38 PM

Hi All

Does anybody have a solution or a confirmation from ST about this issue? It seems that we have observed the same behaviour of the SDADC.

Thanks in advance,
Raphael

2014-03-05 5:09 AM

Hi all,

I personally do not see this problem on my device. Can you please describe the exact configuration in which your SDADC is used:

- Internal or external reference voltage (what is the VREFSD value)?
- Is the SDADC supply voltage VDDSD shared with VDD on the PCB?
- Is it observed in single-ended mode only or also in differential mode, and which mode exactly?
- Which gain is used?
- ... more details about the PCB design: were the supply paths and decoupling designed correctly?

My hypothesis is that the problem comes from the working principle of the internal voltage regulator (the 1.8 V regulator for the CPU core and peripherals). Because of the way it regulates, its 1.8 V output has a sawtooth-like shape over time, with a period from 10 seconds up to 2 minutes (the period depends on ambient temperature). This period corresponds to the ~25 seconds you observed at room temperature. If the 1.8 V core voltage varies with this sawtooth shape, then the current drawn from it also varies with a similar sawtooth shape. If the PCB is not designed correctly, this can cause voltage drops on the supply voltages or on the reference voltages, depending on the schematic design (the source of the reference voltages) and the PCB design (voltage drops on the supply traces).

Please check the points above and give me the information needed to reproduce your problem.

Regards,
Igor
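
To judge whether a reference-voltage disturbance of this kind could plausibly account for a shift of about 10 counts, a rough estimate helps. The sketch below assumes an ideal ratiometric converter, where the code measured from the zero-input reading is proportional to Vin/Vref, so a fixed input read against a changed reference gives code_after = code_before × Vref / (Vref + dV). That model, the function name `vref_change_for_shift`, and the example numbers are assumptions for illustration, not figures from the data sheet or from this thread.

```c
/* Reference change dV (in volts) that would move a fixed input's code from
 * code_before to code_after, under the ideal ratiometric assumption
 * code ~ Vin / Vref. Codes are measured from the zero-input reading.
 * A positive result means the effective reference would have risen. */
static double vref_change_for_shift(double vref_v, double code_before,
                                    double code_after)
{
    return vref_v * (code_before / code_after - 1.0);
}
```

For example, with a hypothetical 3.3 V reference and a reading near mid-scale (32768 dropping to 32758), the function returns roughly 1 mV. Under this model the shift in counts scales with the reading, so comparing the shift size across channels held at different DC levels is one way to test the idea.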

2014-08-19 11:17 AM

2014-12-03 8:18 AM

Hi Julian,

We have a similar effect. Is there any progress on your side? I'm happy to pay a bottle of wine for it :)

2018-08-07 10:43 PM

I have the same problem. Is there a workaround for this behaviour?
