How to use a six axis MEMS device and build advanced applications while processing in-the-edge

2023-03-03 12:04 AM

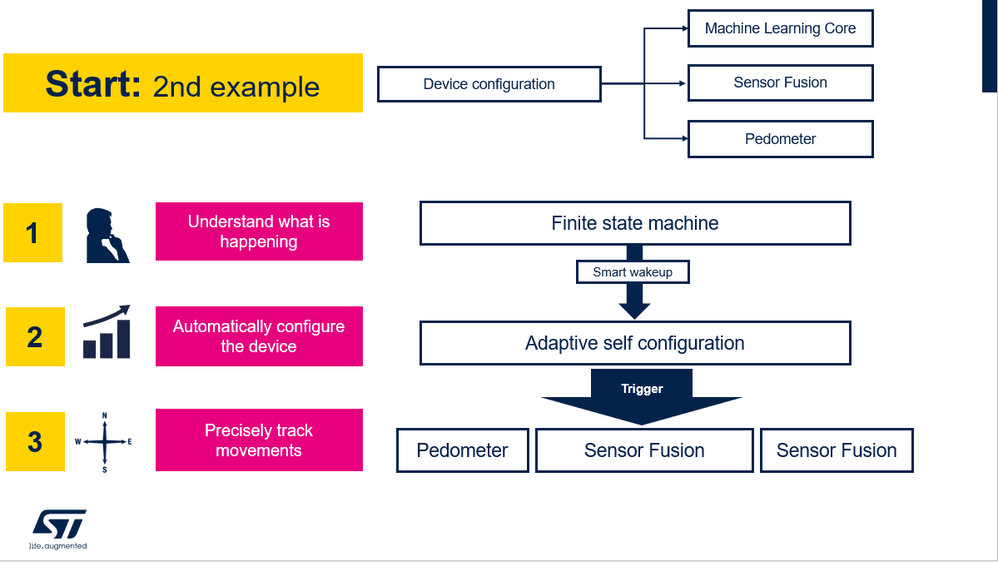

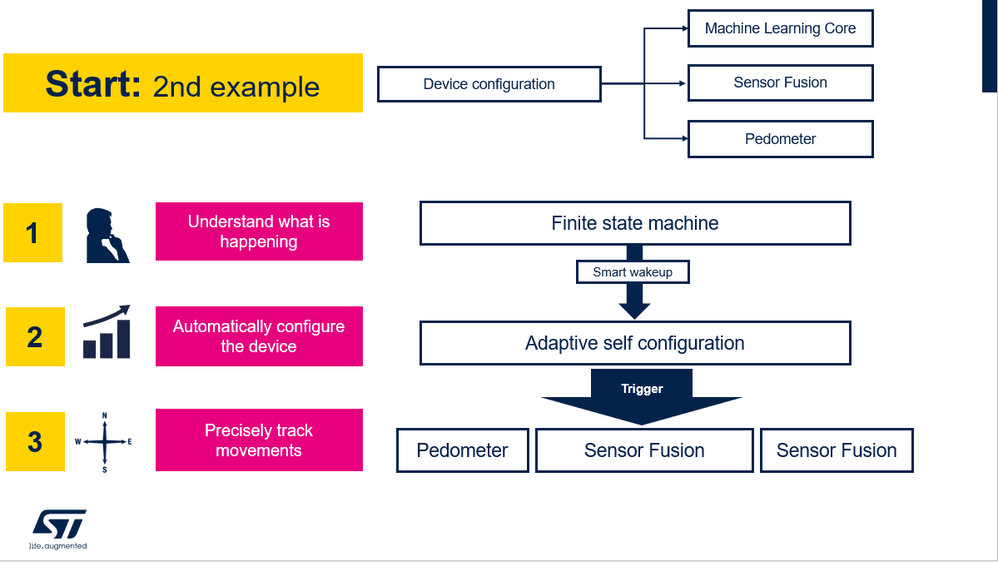

In this knowledge article, we show how to use the edge-processing features of the LSM6DSV16X with the Unico-GUI software tool to build the following sequence: a smart wake-up, a device reconfiguration, and finally spatial motion tracking, context recognition with a machine learning algorithm, and step counting with the pedometer.

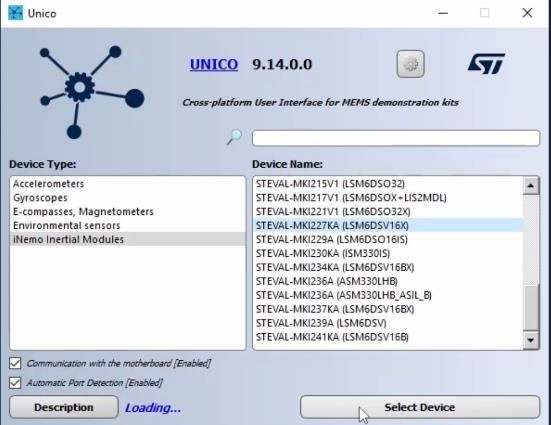

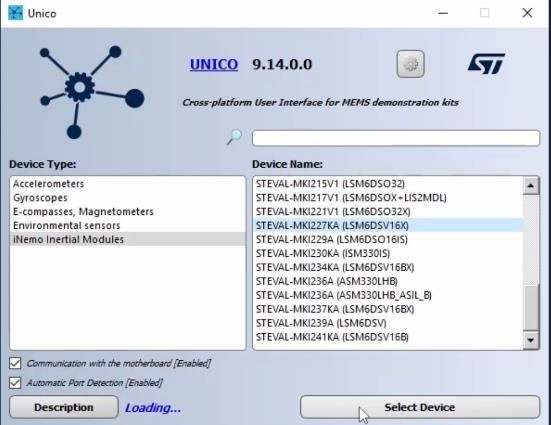

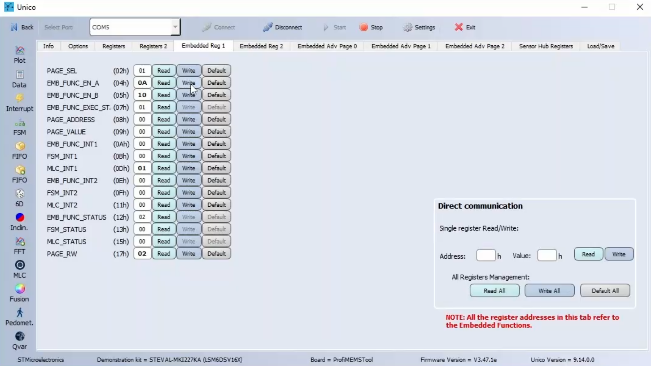

Clicking the Start button on the top bar enables communication between the STEVAL-MKI109V3, the STEVAL-MKI227KA, and the PC.

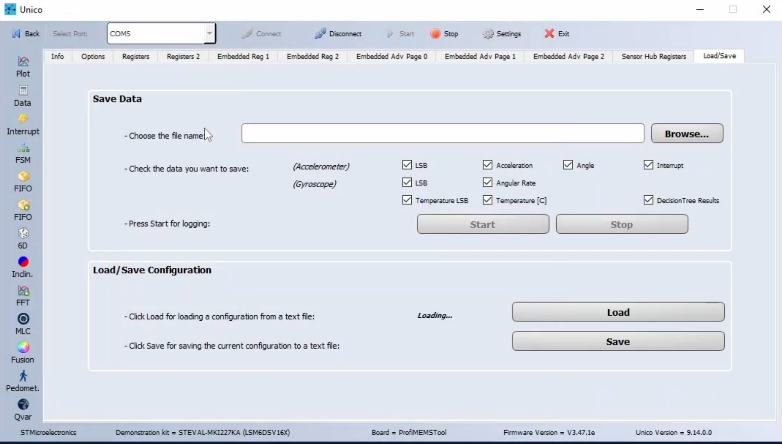

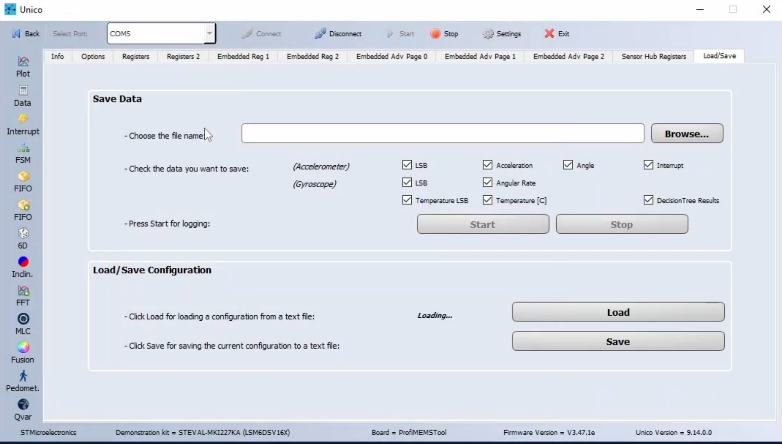

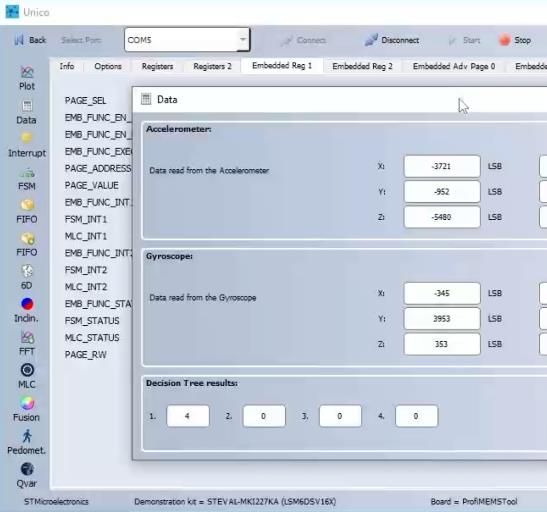

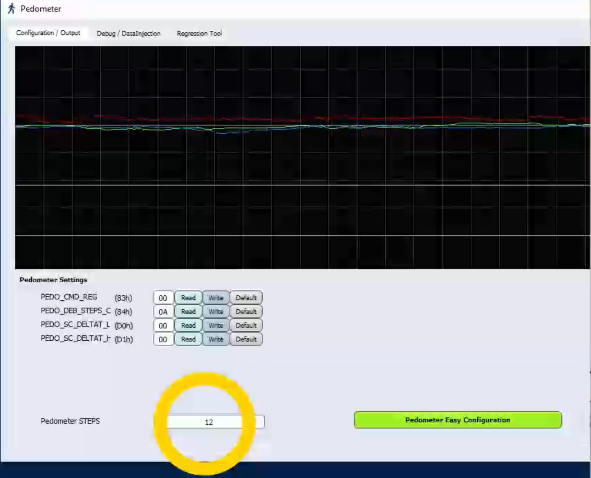

Going back to the Unico-GUI main page and selecting Options shows the output data rate and full scale of both the accelerometer and the gyroscope.

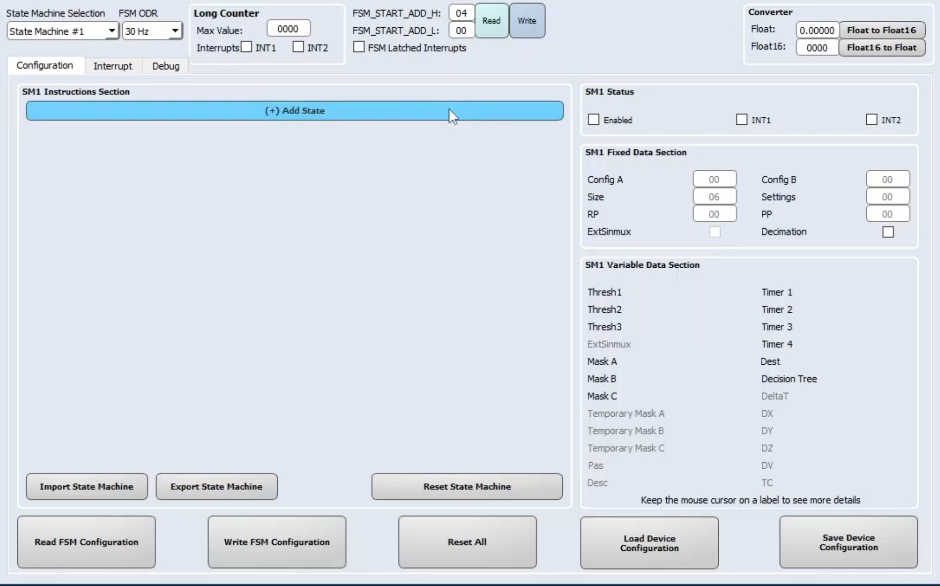

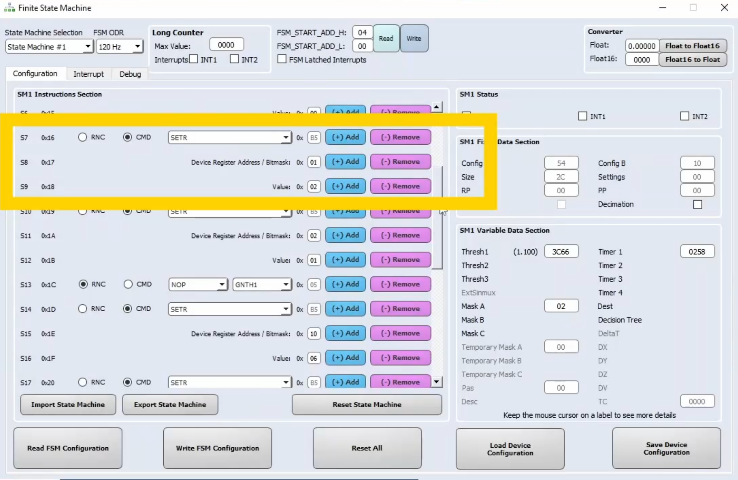

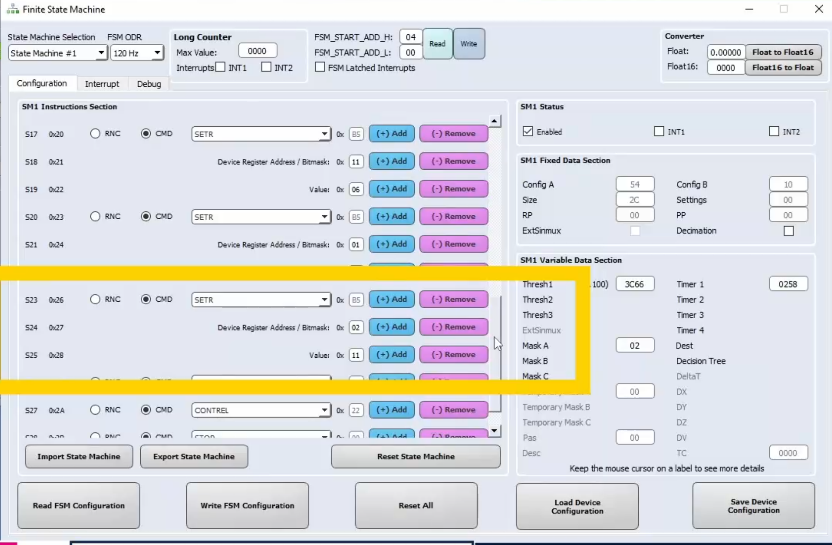

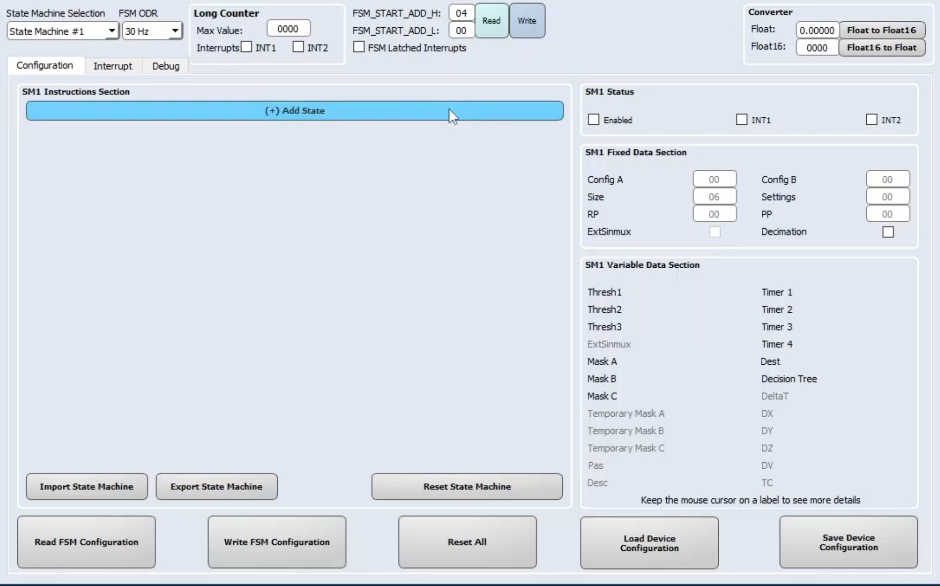

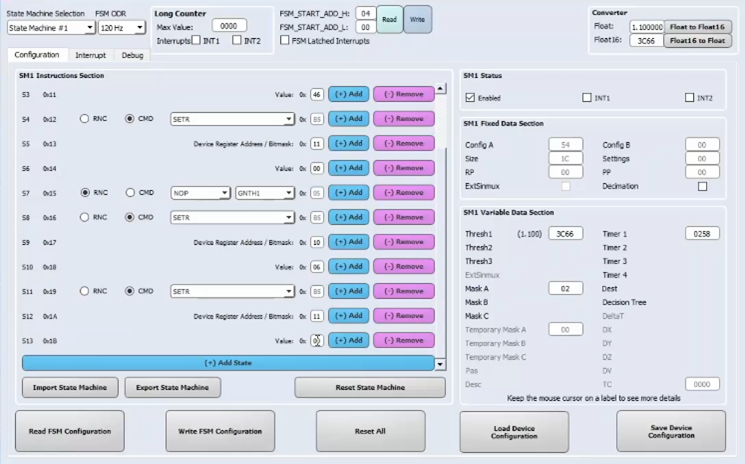

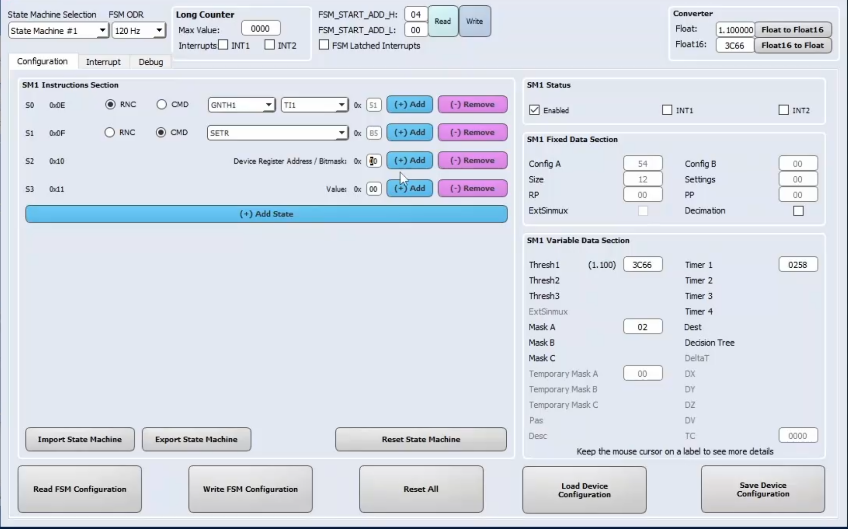

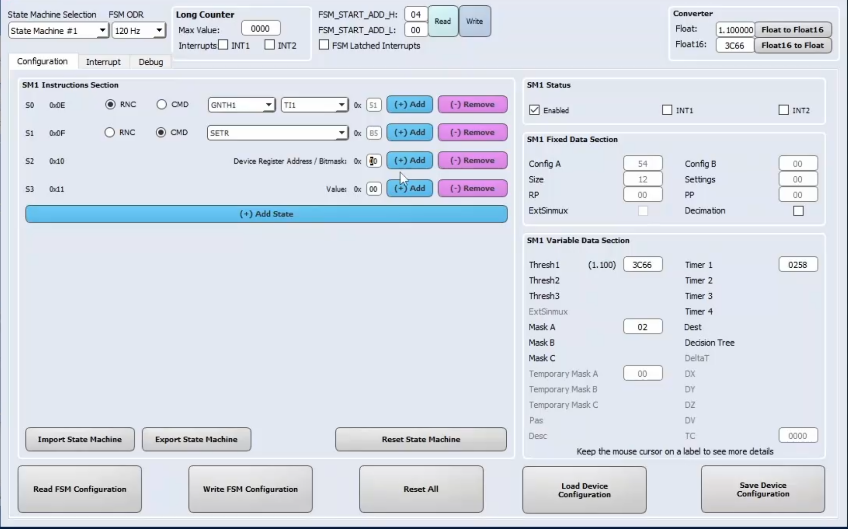

The first step is to align the FSM ODR with the sensor ODR, which is 120 Hz.

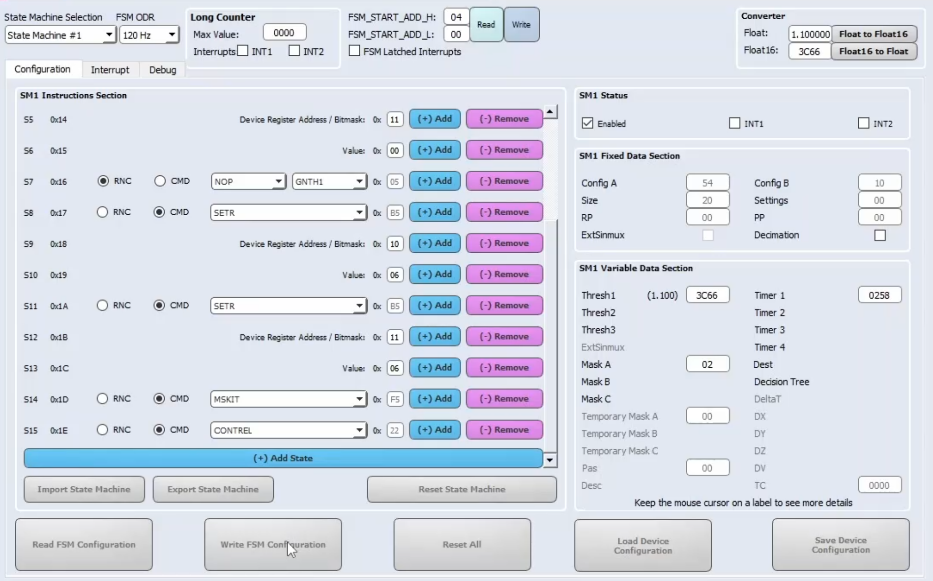

Now we can start building the program. The device starts in a wake state, where the accelerometer and gyroscope are in high-performance mode. We need the device to enter a sleep state, in which only the accelerometer is kept on, in low-power mode. The sleep state is entered when the device has been stationary for a while, for example 5 seconds.

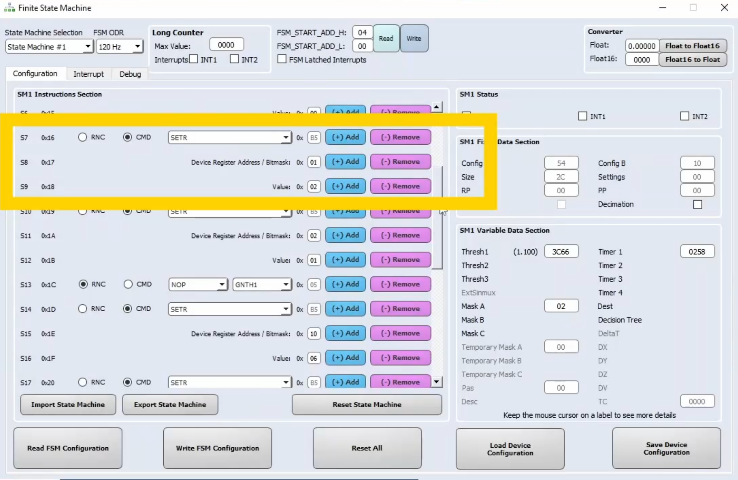

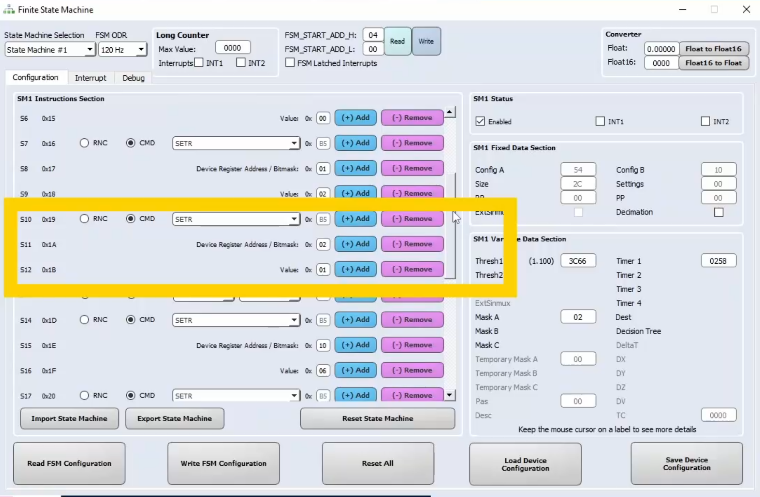

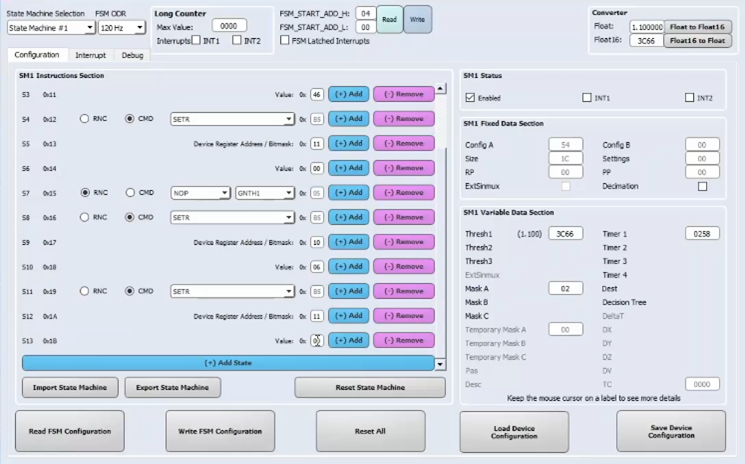

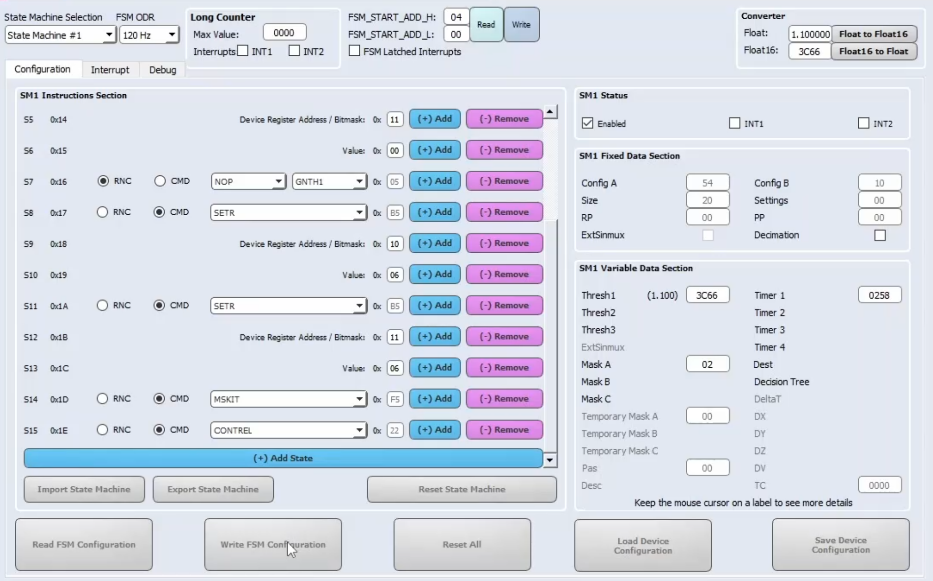

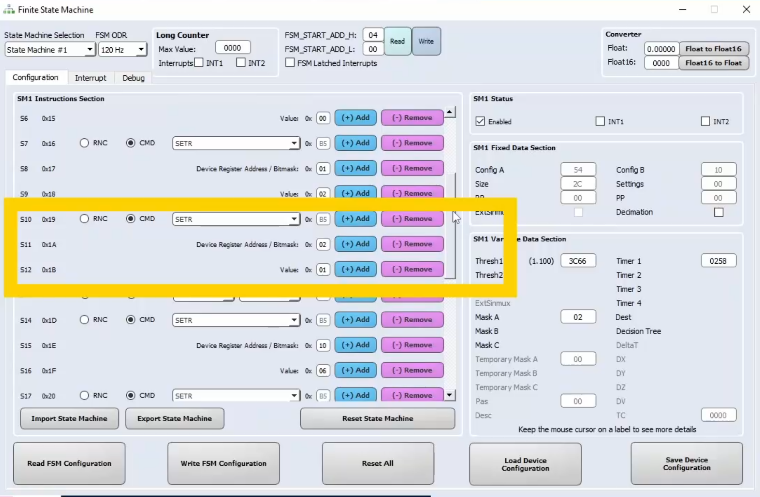

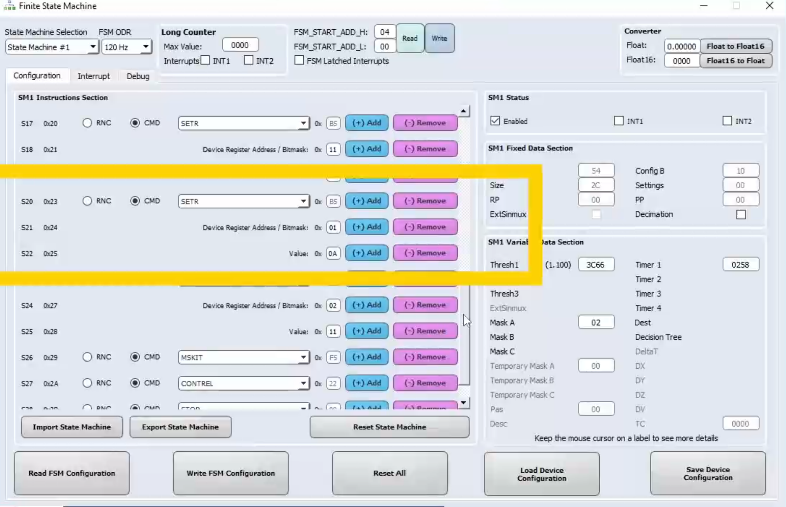

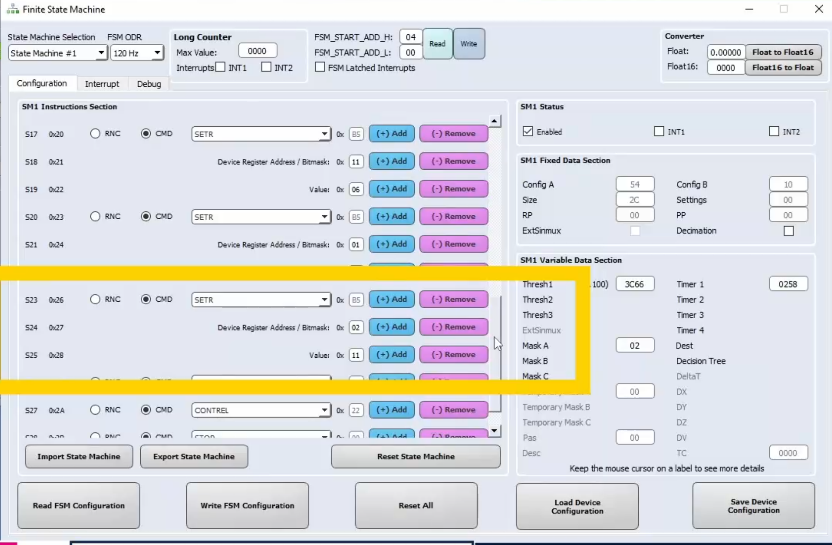

Then, we need to configure a wake-up that sets the accelerometer and gyroscope back to 120 Hz in high-performance mode. In this case, the wake-up condition is a NOP | GNTH1, using the same threshold as before. Once the wake-up signal is detected, four more consecutive SETR commands change the sensor configuration again.

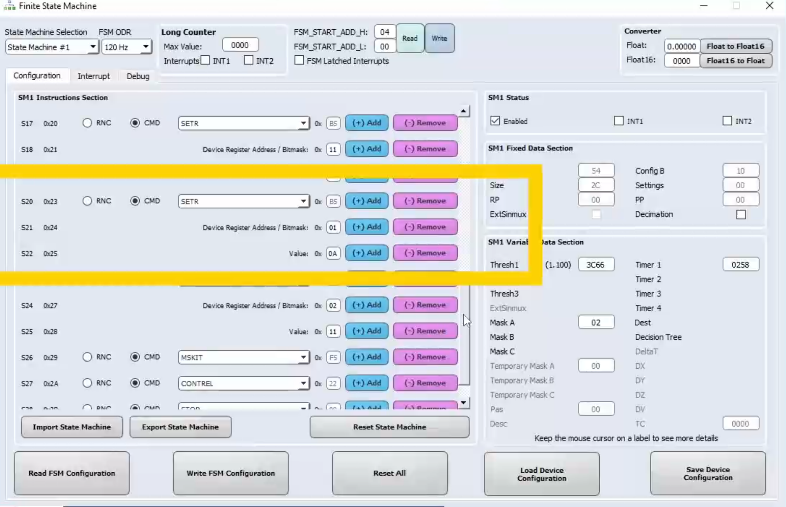

To allow the FSM to write the device registers using SETR commands (that is, to enable the ASC feature), we also need to set the FSM_WR_CTRL_EN bit in the FUNC_CFG_ACCESS register. Go to the Registers tab and manually set this bit to 1.

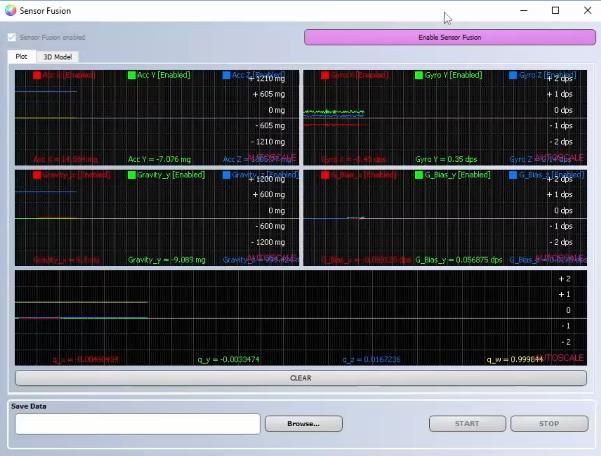

Let us open the sensor fusion, machine learning core, and pedometer views again. During movements, the current consumption of the device is the same as before. When the board is stationary for more than 5 seconds, however, the current consumption drops significantly. It is important to highlight that when the board starts moving again, the orientation of the teapot is not affected by the gyroscope turning on or by the accelerometer power-mode change.

Before starting, what do you need?

Software

The software tool that we use in this knowledge article is Unico-GUI, a graphical user interface (available for Linux, macOS, and Windows) that supports a wide range of sensors. It allows building an MLC program, even in offline mode without any board connected, and generating a sensor configuration file (UCF file).

Hardware

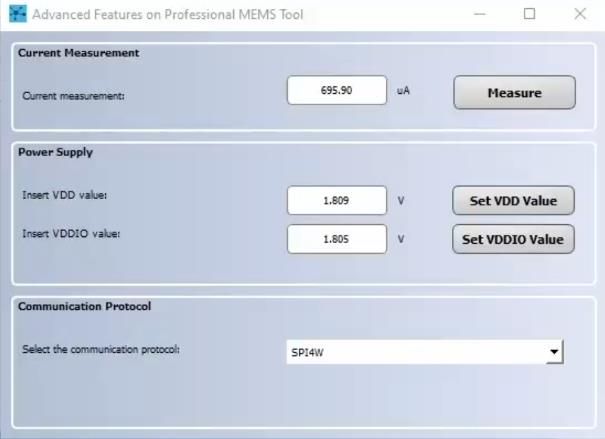

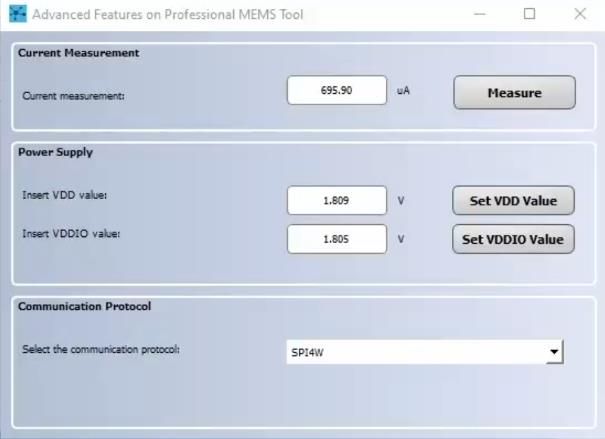

The STEVAL-MKI109V3 is used in this tutorial to configure MEMS sensors and evaluate sensor outputs. It also offers some advanced features, for instance:

- programmable voltage power supply

- current consumption measurement

- communication protocol selectable between SPI and I²C

For more hardware details, visit:

- STMicroelectronics resource page on MEMS sensor

- AN5882 for more details on the Finite State Machine

Final goal

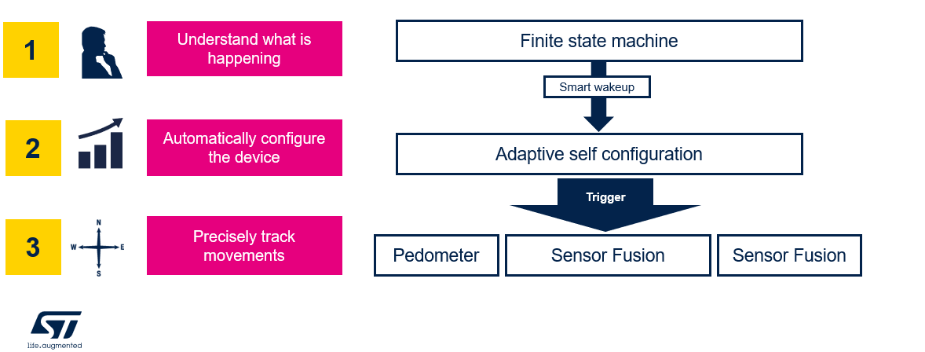

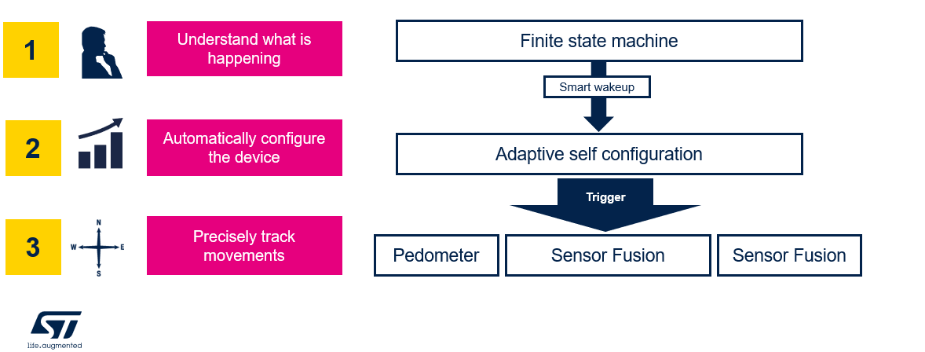

The aim of this knowledge article is to implement the sequence of steps below with Unico-GUI and the edge-processing features of the LSM6DSV16X: smart wake-up, reconfiguration of the device to run in high-performance mode, and finally spatial movement tracking.

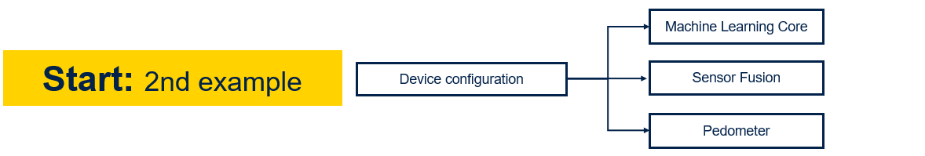

How to build the device configuration

This article shows how to implement a configuration that combines the machine learning core, sensor fusion, and the pedometer.

After opening Unico-GUI, select the LSM6DSV16X device with its adapter board, the STEVAL-MKI227KA.

After pressing the “Select Device” button, all the edge-processing functionalities of the device appear on the left-hand side: FSM, MLC, Pedometer, Sensor Fusion, Qvar.

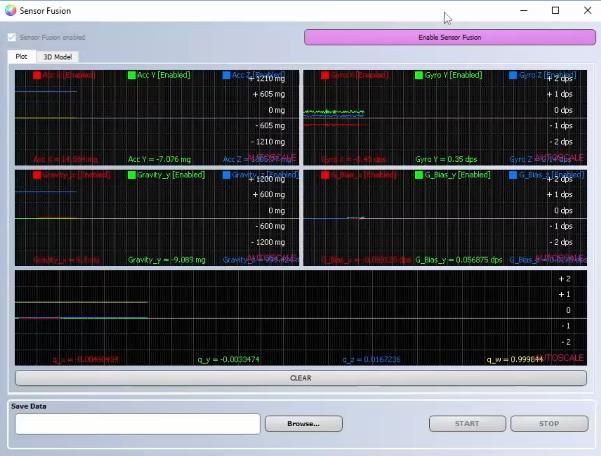

Sensor Fusion

Let us start by enabling the sensor fusion: press the fusion tool and then the “Enable sensor fusion” button.

To know more about sensor fusion, please refer to this knowledge article.

Machine Learning

Let us now upload the machine learning configuration. For this knowledge article I used one of the existing MLC configurations available in the MEMS GitHub repository. More specifically, it is the configuration for gym activity recognition for the right hand.

At this link you can find the prebuilt configuration: https://github.com/STMicroelectronics/STMems_Machine_Learning_Core/tree/master/application_examples/lsm6dsv16x/gym_activity_recognition

Once the UCF file is downloaded, select the load/save bar in Unico-GUI and load the .ucf configuration.
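
To inspect what a configuration writes before loading it, the UCF file can be read on the host. The sketch below assumes the file is plain text where each register write is a line of the form `Ac <address> <value>` in hexadecimal and any other line (such as a `--` comment) can be skipped. The function name `parse_ucf` and the sample content are hypothetical and are not taken from the real gym activity recognition file.

```python
from typing import List, Tuple


def parse_ucf(text: str) -> List[Tuple[int, int]]:
    """Return (address, value) pairs for every register write in a UCF text."""
    writes = []
    for line in text.splitlines():
        parts = line.split()
        # Assumed format: "Ac <address> <value>", both in hexadecimal.
        # Anything else (comments, blank lines) is ignored.
        if len(parts) == 3 and parts[0] == "Ac":
            writes.append((int(parts[1], 16), int(parts[2], 16)))
    return writes


# Hypothetical excerpt used only to exercise the parser.
SAMPLE = """-- hypothetical excerpt, not taken from the real file
Ac 01 80
Ac 04 0A
"""

for address, value in parse_ucf(SAMPLE):
    print(f"write 0x{value:02X} to register 0x{address:02X}")
```

The same list of pairs could then be replayed over SPI or I²C by whatever driver the host application already uses.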

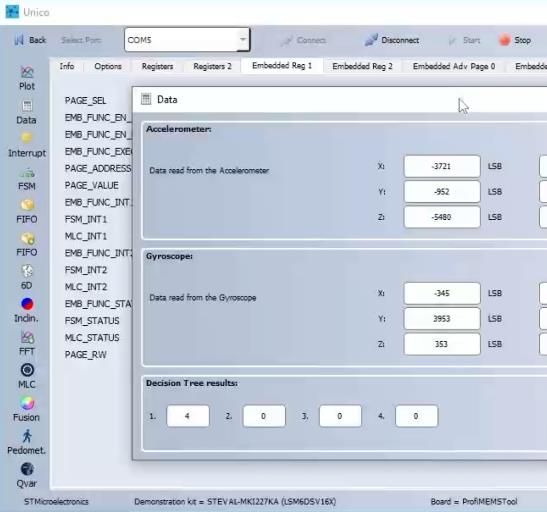

The selected configuration provides the following MLC outputs, as explained in the readme file on GitHub:

- 0–Steady

- 4–Biceps curls

- 8–Lateral rise

- 12–Squat

Pedometer

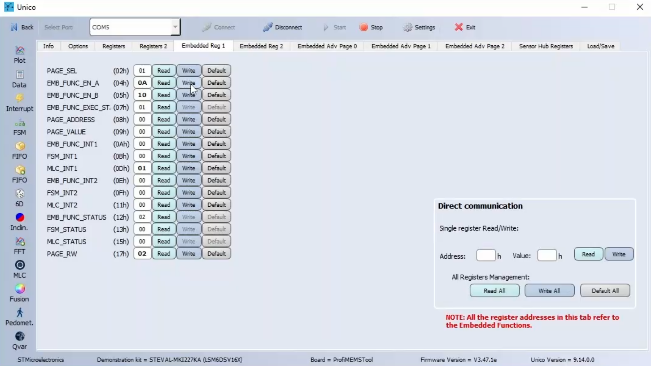

Finally, we can enable the pedometer 2.0 by setting the PEDO_EN bit in the EMB_FUNC_EN_A register. To do so, go to the Embedded Reg 1 tab and manually write the value 0A in register 04.
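
The value 0A can be read as a combination of enable bits. The sketch below assumes the bit positions implied by the values quoted in this article: 0A enables the pedometer together with the sensor fusion, while 02 (used later for the sleep state) keeps only the sensor fusion on. The constant names and the helper `emb_func_en_a` are illustrative; the bit positions should be checked against the LSM6DSV16X datasheet.

```python
# Bit positions are assumptions inferred from the values quoted in this
# article (0x0A = pedometer + sensor fusion, 0x02 = sensor fusion only).
# Verify them against the LSM6DSV16X datasheet before relying on them.
SFLP_GAME_EN = 1 << 1  # assumed: sensor fusion enable bit
PEDO_EN = 1 << 3       # assumed: pedometer enable bit


def emb_func_en_a(pedometer: bool, sensor_fusion: bool) -> int:
    """Build the byte to write to EMB_FUNC_EN_A (register 04 in this article)."""
    value = 0
    if sensor_fusion:
        value |= SFLP_GAME_EN
    if pedometer:
        value |= PEDO_EN
    return value


# Wake configuration, then sleep configuration, as two-digit hex strings.
print(f"{emb_func_en_a(pedometer=True, sensor_fusion=True):02X}")
print(f"{emb_func_en_a(pedometer=False, sensor_fusion=True):02X}")
```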

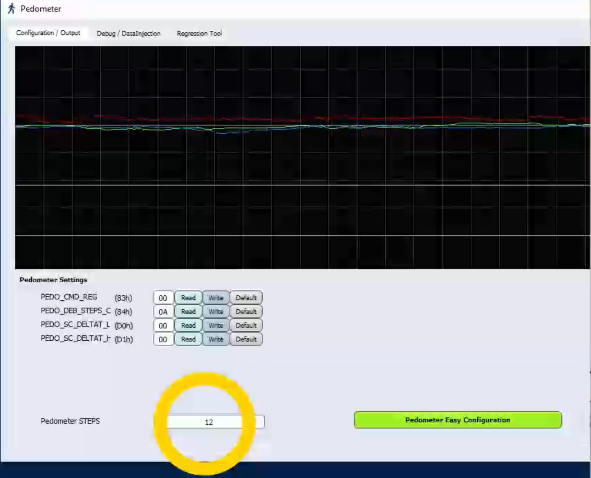

The pedometer output can be checked immediately by clicking the pedometer button.

With this configuration, the sensors are set as follows:

- Accelerometer:

  - 8 g full scale

  - 120 Hz ODR

- Gyroscope:

  - 2000 dps full scale

  - 120 Hz ODR

Final flow

Now that the configuration is complete, the following flow must be implemented using the FSM tool.

Clicking the FSM button opens the FSM screen.

During movements, the accelerometer and gyroscope are configured at 120 Hz in high-performance mode. In this condition, the MLC, the pedometer 2.0, and the SFLP are fully functional. The sensor provides the gym activity recognition output, the number of steps, and the orientation of the device.

To enter the sleep state, the first instruction of the FSM is a GNTH1 | TI1 condition. The configured threshold is 1.1 g, applied to the accelerometer norm signal, and the timer is 600 samples (which corresponds to 5 seconds at 120 Hz). Refer to AN5882 for more details about the FSM conditions.
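
The timer value follows directly from the stationary time and the ODR, and the threshold is compared against the norm of the three accelerometer axes. A minimal sketch of both calculations, with illustrative helper names that are not part of any ST tool:

```python
import math


def timer_samples(duration_s: float, odr_hz: float) -> int:
    """Number of FSM samples that corresponds to a duration at a given ODR."""
    return round(duration_s * odr_hz)


def timer_duration_s(samples: int, odr_hz: float) -> float:
    """Duration in seconds covered by a number of samples at a given ODR."""
    return samples / odr_hz


def accel_norm_g(x_g: float, y_g: float, z_g: float) -> float:
    """Euclidean norm of the acceleration vector, in g."""
    return math.sqrt(x_g * x_g + y_g * y_g + z_g * z_g)


print(timer_samples(5, 120))          # samples for 5 s at 120 Hz
print(timer_duration_s(600, 120))     # seconds covered by 600 samples
print(accel_norm_g(0.0, 0.0, 1.0) > 1.1)  # board at rest: below threshold
```

A board at rest measures only gravity, so its norm stays close to 1 g and below the 1.1 g threshold, which is why the timer is allowed to run to completion.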

The next instruction is reached when the device has been stationary for 5 seconds. When this happens, we want to turn off the gyroscope and set the accelerometer to low-power mode. For this purpose, four consecutive SETR commands are issued. Two of them change the accelerometer power mode and turn off the gyroscope.

A third SETR command disables the pedometer while the device is stationary. Its first argument is the byte 01, which selects the embedded function enable A register (EMB_FUNC_EN_A), and its second argument is the byte 02, which means that the pedometer is disabled while the sensor fusion is kept on.

The fourth SETR command disables the MLC while the device is stationary. The first argument is the byte 02 and the second argument is the byte 01, which means that the MLC is disabled while the sensor fusion is kept on.

After the wake-up is detected, four more SETR commands are issued. The first and the second set the accelerometer and gyroscope back to 120 Hz in high-performance mode.

The third SETR command enables the pedometer when the wake-up is detected. The first argument is the byte 01 and the second is the byte 0A, which means that the pedometer is woken up together with the sensor fusion.

Finally, the last SETR command enables the MLC after a smart wake-up is detected.

The first argument is the byte 02 and the second is the byte 11, which means that the MLC is enabled together with the sensor fusion.

Moreover, we need to reset the program by using the CONTREL command. To avoid generating an interrupt, the MSKIT command is issued before the CONTREL command.
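
The wake and sleep logic described above can be modeled on the host to check thresholds and timings before programming the device. The sketch below is a simplified model, not the FSM implementation inside the sensor: it assumes that GNTH1 | TI1 restarts the timer whenever the norm exceeds the threshold and moves on once the timer elapses, and that NOP | GNTH1 moves on as soon as the norm exceeds the threshold. The class and attribute names are hypothetical.

```python
from enum import Enum

THRESHOLD_G = 1.1    # threshold on the accelerometer norm, from the article
TIMER_SAMPLES = 600  # 5 seconds at 120 Hz, from the article


class PowerState(Enum):
    WAKE = "wake"    # accelerometer and gyroscope in high-performance mode
    SLEEP = "sleep"  # accelerometer only, in low-power mode


class WakeSleepModel:
    """Host-side model of the wake/sleep flow (assumed behavior)."""

    def __init__(self, threshold_g=THRESHOLD_G, timer_samples=TIMER_SAMPLES):
        self.threshold_g = threshold_g
        self.timer_samples = timer_samples
        self.state = PowerState.WAKE
        self.quiet_samples = 0

    def step(self, norm_g: float) -> PowerState:
        """Feed one accelerometer norm sample and return the new state."""
        moving = norm_g > self.threshold_g
        if self.state is PowerState.WAKE:
            if moving:
                # Reset condition (GNTH1): motion restarts the timer.
                self.quiet_samples = 0
            else:
                self.quiet_samples += 1
                if self.quiet_samples >= self.timer_samples:
                    # Next condition (TI1): timer elapsed, go to sleep.
                    self.state = PowerState.SLEEP
        elif moving:
            # NOP | GNTH1: any sample above the threshold wakes the device.
            self.state = PowerState.WAKE
            self.quiet_samples = 0
        return self.state


model = WakeSleepModel()
for _ in range(TIMER_SAMPLES):
    model.step(1.0)            # board at rest
print(model.state.value)       # state after 5 s without motion
print(model.step(1.5).value)   # state after one sample above the threshold
```

On the device, the transitions are where the SETR commands run, so each change of `state` in this model corresponds to one group of four register writes.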

Conclusion

We have seen how to use the edge-processing features of the LSM6DSV16X to quickly develop an application flow consisting of smart wake-up, device reconfiguration, and finally spatial tracking. You can find a dedicated webinar on the topic at this link: https://content.st.com/smart-energy-efficient-applications-with-st-intelligent-imu-webinar.html
