How to use TFLite Micro Runtime with optimized kernel from CMSIS-NN?

2021-08-17 2:50 PM

Hi everyone,

X-CUBE-AI provides two different runtimes, TFLite Micro and STM32Cube.AI. When I checked the kernels used by TFLite Micro, it seems that they are not the optimized kernels from CMSIS-NN. For STM32Cube.AI, we can't see how the kernels are implemented because it is not open source. However, we can guess that it uses CMSIS-NN, because its latency is much shorter than that of TFLite Micro.

My question is: does anyone know how to make TFLite Micro use the CMSIS-NN library when using the X-CUBE-AI package to generate the related C files?

Thank you very much for your assistance and reply.

Best regards,

Jeff

2021-08-18 12:06 AM

Hi Jeff,

The TFLite Micro runtime support in the X-CUBE-AI pack is a facility to generate a full STM32 IDE project (STM32CubeIDE, Keil, IAR) including the sources of the TFLm interpreter (see C:\Users\<user_name>\STM32Cube\Repository\Packs\STMicroelectronics\X-CUBE-AI\7.0.0\Documentation\tflite_micro_support.html).

In X-CUBE-AI, the TFLm sources are imported without modification from the official TensorFlow repo v2.5 (GitHub - tensorflow/tensorflow at v2.5.0). The optimized kernels from CMSIS-NN are used when available (see the Middlewares/tensorflow/tensorflow/lite/micro/kernels/cmsis_nn directory); otherwise the default implementation is used. Thanks to CubeMX, this approach allows easy deployment of a TFLite model on STM32-based boards using a public TFLm runtime.

To answer your question: this is the default mode (the source files of the non-CMSIS-NN variants of these kernels are not provided). Concerning the latency, the X-CUBE-AI runtime library is partially based on CMSIS-NN; some critical kernels have been rewritten to take the STM32 architecture into account (there are also multiple optimizations of the memory usage). In any case, results are model dependent, in particular for the latency. As the TFLm runtime is provided as source files, and despite a certain vigilance on our side, can you check during your evaluation that the compilation options are correctly defined (optimization for speed) in the generated build system?

br,

Jean-Michel

2021-08-18 11:55 AM

Hi Jean-Michel,

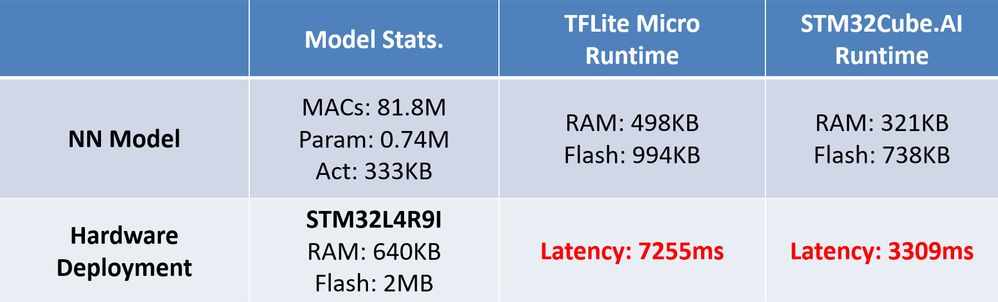

Thank you for your reply. The following is the comparison between TFLite Micro and Cube.AI runtime based on the same model:

I also checked the kernel files that TFLite Micro is using. It seems that it uses the kernels under Middlewares\tensorflow\tensorflow\lite\micro\kernels\ (I am not sure whether this is correct) instead of the optimized kernels in Middlewares\tensorflow\third_party\cmsis\CMSIS\NN\Source\. The kernel implementations in these two folders are quite different.

When generating projects in TensorFlow Lite Micro, TAGS=<subdirectory_name> can be used to select optimized kernels [1]. Does the TFLite Micro runtime in X-CUBE-AI automatically use the optimized kernels when generating the C files, or can we set this manually?

And how can I set the compilation options for speed optimization? Thank you for your help.

[1] https://www.tensorflow.org/lite/microcontrollers/library

Best regards,

Jeff

2021-08-18 1:41 PM

Hi Jeff,

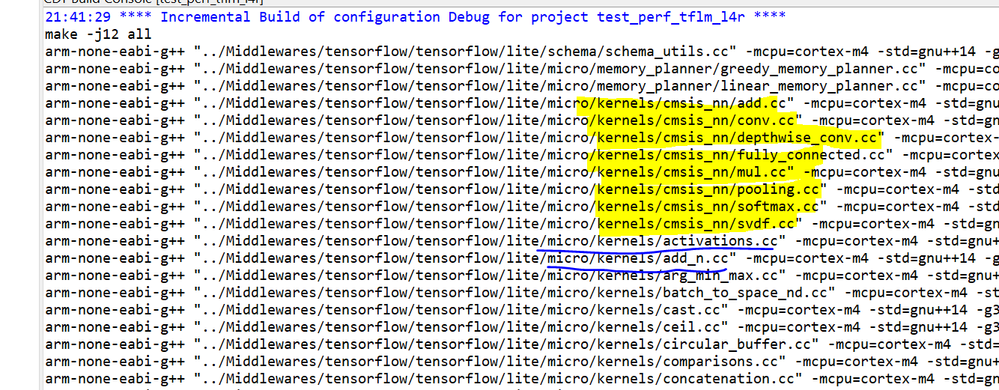

Thanks to share with us the results of your experimentation. As mentioned previously, all TFL operators are not based on CMSIS-NN, following snapshot (based on the log of a STM32CudeIDE project) shows the kernels which are CMSIS-NN based (highlighted in yellow) else "default' implementation is used. The generated STM32 project is equivalent to use the TAGS=cmsis_nn (now OPTIMIZED_KERNEL_DIR=<optimized_kernel_dir> is used with v2.5). The exported source tree for the sub-folder "Middlewares/tensorflow " is equivalent to the output of the TFLM script (create_tflm_tree.py)

$ cd <tensorflow_repo>

$ python tensorflow/lite/micro/tools/project_generation/create_tflm_tree.py <dest> --makefile_options "OPTIMIZED_KERNEL_DIR=cmsis_nn

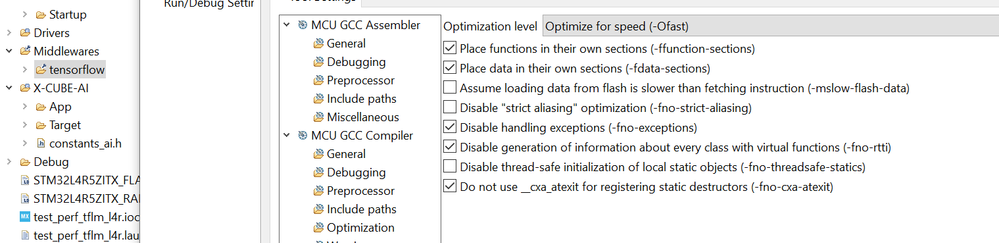

For the compilation options, you can check the setting/properties of the "tensorflow" sub-folder as follow:

Best regards,

Jean-Michel

PS: from the TensorFlow repo itself, it is possible to build a static TFLM runtime library (libtensorflow-microlite.a) that can be used in your project:

https://github.com/tensorflow/tensorflow/tree/r2.5/tensorflow/lite/micro/cortex_m_generic

Typical command (for a Cortex-M7 with single-precision FPU):

$ cd <tensorflow repo> # tag r2.5

$ make -f tensorflow/lite/micro/tools/make/Makefile TARGET=cortex_m_generic TARGET_ARCH=cortex-m7+fp OPTIMIZED_KERNEL_DIR=cmsis_nn microlite

...

arm-none-eabi-ar -r tensorflow/lite/micro/tools/make/gen/cortex_m_generic_cortex-m7+fp_default/lib/libtensorflow-microlite.a ...

2021-08-18 8:20 PM

Hi Jean-Michel,

Thank you for your clear description. So the optimized CMSIS-NN kernels are used automatically whenever a kernel has a CMSIS-NN version? Is there any way to avoid using CMSIS-NN, so that I can compare the performance with and without it?

Another question: during the compilation process, all of the following files are included ("add" can be changed to "fully connected"):

Middlewares/tensorflow/third_party/cmsis/CMSIS/NN/Source/NNSupportFunctions/arm_nn_add_q7.c

Middlewares/tensorflow/tensorflow/lite/micro/kernels/cmsis_nn/add.cc

Middlewares/tensorflow/tensorflow/lite/micro/kernels/add_n.cc

Because the code in these three files is quite different, which one is actually used for inference? Can you briefly explain the difference between these three files?

The toolchain I used is GNU Tools for STM32 (9-2020-q2-update).

The current optimization level is "optimize for size". Could you also briefly explain the difference between the size and speed settings, and how the tool optimizes for each?

Sorry for the many questions, and thank you for your assistance.

Best regards,

Jeff

2021-09-02 5:49 AM

Hi Jeff,

+ About the files arm_nn_add_q7.c and cmsis_nn/add.cc

A bit of history about CMSIS-NN and its link with TensorFlow (quantized models). Originally, the quantization scheme supported by CMSIS-NN was the Qm.n format, and all CMSIS-NN arm_nn_xx_qX functions relate to this scheme. CMSIS-NN now targets the quantization scheme adopted by Google/TensorFlow: integer format with scale/offset. For information, versions of X-CUBE-AI before 6.0 supported both quantization schemes; the ST quantizer provided with them (post-training quantization) had to be used to quantize Keras floating-point models, with some limitations on the supported topologies and layers.

As quantized TensorFlow models are based on this new scheme, the "optimized" version of the kernels uses the associated CMSIS-NN implementation when available. For example, if you look at cmsis_nn/add.cc, it calls the low-level CMSIS-NN function arm_elementwise_add_s8() if the int8 type is used and broadcasting is not required; otherwise the fallback ("generic") implementation is used. Be aware that, as this typical example shows, the use of CMSIS-NN (optimized implementation) depends on the needs/settings of the layer itself and on the output shapes of the previous layers.
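To make the difference between the two quantization schemes mentioned above concrete, here is a minimal sketch of how a stored int8 value maps back to a real number in each of them. The function names `DequantizeQmn` and `DequantizeAffine` are hypothetical and exist only for this illustration; they are not part of CMSIS-NN or TFLM.

```cpp
#include <cassert>
#include <cstdint>

// Legacy fixed-point scheme (Qm.n): the raw integer carries `frac_bits`
// fractional bits, so the real value is raw / 2^frac_bits.
inline float DequantizeQmn(int8_t raw, int frac_bits) {
  assert(frac_bits >= 0 && frac_bits <= 7);  // an int8 has at most 7 fractional bits
  return static_cast<float>(raw) / static_cast<float>(1 << frac_bits);
}

// TensorFlow Lite integer scheme (scale/offset):
// real = scale * (q - zero_point), with an arbitrary float scale.
inline float DequantizeAffine(int8_t q, float scale, int32_t zero_point) {
  return scale * static_cast<float>(static_cast<int32_t>(q) - zero_point);
}
```

In the first scheme the scale is always a power of two, while the second allows any scale plus an offset, which is presumably why kernels written for one scheme cannot simply be reused for the other.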

+ Naming conventions for the TFLM source files:

For each TFLite operator, there is normally one .cc file: add.cc for the ADD operator, add_n.cc for the ADD_N operator, conv.cc for the CONV_2D operator, and so on. Each .cc file implements the support for the float and int8 variants of the operator; a switch at the beginning of the "eval" function calls the correct implementation. Consequently, only one file per operator should be used in the build system (otherwise duplicate entry points will be present). To replace the optimized implementation of the ADD operator, download add.cc from the TF repo, copy it to the ..micro/kernels/ directory, and remove the ..micro/kernels/cmsis_nn/add.cc file from the build system. Note that ADD and ADD_N are different TFLite operators.
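The structure described above (one entry point per operator, a switch on the tensor type, and an optimized int8 routine used only when no broadcast is needed) can be sketched as follows. This is a simplified illustration, not the actual content of add.cc: `TensorType`, `AddPath`, `AddParams` and `SelectAddPath` are hypothetical names that only model the decision, without doing any arithmetic.

```cpp
// Hypothetical stand-ins for the tensor types and the possible code paths.
enum class TensorType { kFloat32, kInt8, kOther };
enum class AddPath { kReferenceFloat, kOptimizedInt8, kReferenceInt8, kUnsupported };

struct AddParams {
  TensorType type;
  bool needs_broadcast;  // true when the two input shapes differ
};

// Models the dispatch at the top of an "eval" function in an optimized kernel:
// float always takes the generic path, int8 takes the optimized routine
// unless broadcasting is required.
inline AddPath SelectAddPath(const AddParams& p) {
  switch (p.type) {
    case TensorType::kFloat32:
      return AddPath::kReferenceFloat;
    case TensorType::kInt8:
      return p.needs_broadcast ? AddPath::kReferenceInt8
                               : AddPath::kOptimizedInt8;
    default:
      return AddPath::kUnsupported;
  }
}
```

Because both the generic and the optimized file would define the same entry point for the operator, compiling both into one project would produce duplicate symbols, which is why only one of them may be part of the build.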

+ "ADD" can be changed to the "FULLY_CONNECTED" operator?

I'm not sure I understand; can you elaborate? Anyway, for your model/environment, if you want to change the implementation of a given operator, it is possible to modify/patch the source file and provide your own implementation. The API of the TFLite Micro operators is relatively simple.

Another possible solution is to create a custom layer with your own implementation. At runtime, a TFLM service is available to register this operator with the resolver.
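The idea of registering a custom operator with a resolver can be illustrated with a small stand-alone sketch. To my understanding, in TFLM itself this is done through the op resolver (for example `AddCustom()` on `MicroMutableOpResolver`), but the code below does not use the TFLM API: `TinyOpResolver`, `EvalFn` and `DoubleEval` are hypothetical, simplified stand-ins that only show the name-to-implementation lookup.

```cpp
#include <cstddef>
#include <cstring>

// Hypothetical operator signature: returns 0 on success.
using EvalFn = int (*)(const float* in, float* out, std::size_t n);

// Simplified stand-in for an op resolver with a fixed capacity
// (no dynamic allocation, as is usual on microcontrollers).
template <std::size_t kMaxOps>
class TinyOpResolver {
 public:
  // Registers a custom operator under `name`; fails when the table is full.
  bool AddCustom(const char* name, EvalFn fn) {
    if (count_ == kMaxOps || name == nullptr || fn == nullptr) return false;
    entries_[count_].name = name;
    entries_[count_].fn = fn;
    ++count_;
    return true;
  }

  // Looks up the implementation by name; returns nullptr if it is unknown.
  EvalFn Find(const char* name) const {
    for (std::size_t i = 0; i < count_; ++i) {
      if (std::strcmp(entries_[i].name, name) == 0) return entries_[i].fn;
    }
    return nullptr;
  }

 private:
  struct Entry {
    const char* name;
    EvalFn fn;
  };
  Entry entries_[kMaxOps] = {};
  std::size_t count_ = 0;
};

// Example custom operator: element-wise doubling.
inline int DoubleEval(const float* in, float* out, std::size_t n) {
  for (std::size_t i = 0; i < n; ++i) out[i] = 2.0f * in[i];
  return 0;
}
```

A caller would create a `TinyOpResolver<4>`, call `AddCustom("DOUBLE", DoubleEval)` once at start-up, and later retrieve the function with `Find("DOUBLE")` when the model references that operator name.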

+ Last point: the compiler options

Have you tried "Optimize for speed (-Ofast)" or "-O3" for the compilation of the TFLM files, or for the whole project? Perhaps also without the debug option.
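One way to double-check which optimization setting actually reached a given source file is to look at the macros that GCC-compatible compilers predefine. The sketch below assumes a GCC or Clang based toolchain (such as the GNU Tools for STM32 mentioned in this thread); with other compilers these macros may be absent, in which case it simply reports "none". The function names are hypothetical.

```cpp
// Reports how this translation unit was optimized, based on the
// __OPTIMIZE__ and __OPTIMIZE_SIZE__ macros predefined by GCC and Clang.
inline const char* OptimizationMode() {
#if defined(__OPTIMIZE_SIZE__)
  return "size";       // built with -Os
#elif defined(__OPTIMIZE__)
  return "optimized";  // built with -O1 or higher (including -O3 and -Ofast)
#else
  return "none";       // built with -O0, or the compiler does not define the macros
#endif
}

// True when fast-math is active, which -Ofast turns on in GCC.
inline bool UsesFastMath() {
#if defined(__FAST_MATH__)
  return true;
#else
  return false;
#endif
}
```

Since the result depends on the flags used for the file that contains the function, placing a copy inside the "tensorflow" sub-folder and printing the value at start-up would show whether the per-folder setting differs from the rest of the project.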

br,

Jean-Michel

2025-01-05 4:48 PM - edited 2025-01-06 7:24 PM

Hi jean-michel.d,

I find this discussion really useful. Recently, I have been trying to build TFLM tree projects without CMSIS-NN (using the default reference kernels). Following your hint, I cloned TensorFlow 2.5 locally and generated the projects with the following commands:

python3 tensorflow/lite/micro/tools/project_generation/create_tflm_tree.py \
-e hello_world \
--makefile_options="TARGET=cortex_m_generic TARGET_ARCH=cortex-m7+fp" \
../tflm-tree-2.5.0

python3 tensorflow/lite/micro/tools/project_generation/create_tflm_tree.py \
-e hello_world \
--makefile_options="TARGET=cortex_m_generic TARGET_ARCH=cortex-m7+fp OPTIMIZED_KERNEL_DIR=cmsis_nn" \
../tflm-cmsisnn-2.5.0

commit a4dfb8d1a71385bd6d122e4f27f86dcebb96712d (HEAD, tag: v2.5.0)

Merge: 2107b1dc414 16b813906fc

Author: Mihai Maruseac <mihaimaruseac@google.com>

Date: Wed May 12 06:26:41 2021 -0700

Merge pull request #49124 from tensorflow/mm-cherrypick-tf-data-segfault-fix-to-r2.5

[tf.data][cherrypick] Fix snapshot segfault when using repeat and pre…