How to make feature scale vectors?

2020-06-03 6:57 AM

Hello, I am an undergraduate student in South Korea. I've run into a problem during my research, and I'm posting here because I believe you can help me solve it. I want my model to run correctly on the SensorTile, but despite many attempts it does not work properly.

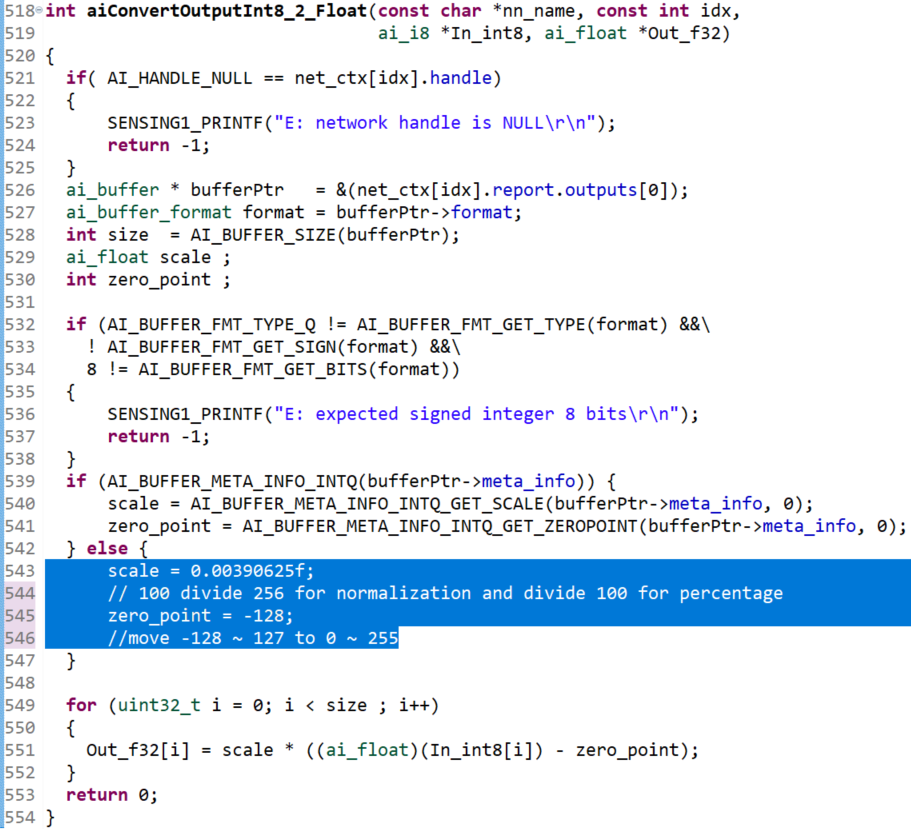

Simply put, I quantized my model using the npz file required for verification. I converted the quantized model file with the code generator (STM32 AI 5.0) and replaced the corresponding C and H files in the function pack (FP-AI-SENSING1) with the generated ones. Finally, the code that handles the softmax output did not work because the output meta info was absent. So, as in the blue part of the attached photo, I hard-coded the values arbitrarily.

I tried ASC with two models (1. the model provided by the function pack, 2. a model I trained myself) and got conflicting results. The provided model worked properly when taken through the same process. My model, however, passed verification, yet after conversion a few of the results stayed nearly fixed and the inference results were not normal.

I think this is caused by wrong feature scale vectors, because the attached annotation was made for the provided model. However, I can't find a way to apply this to my model through the code generator.

If anyone knows a solution, please let me know. Thank you for reading.

2020-06-03 7:15 AM

Hello,

Can you check that the model imported into the tool has quantized outputs?

The "Analyze" command reports the format of the inputs/outputs (including the scale/zero values).

Which tool did you use for the quantization? Was it the CLI from the X-CUBE-AI pack?

br,

Jean-Michel

2020-06-03 9:13 AM

To Jean-Michel

Thank you for your quick response.

Here is the link to the output report: https://www.notion.so/asc_generate_report-txt-2c4b1bc2bc5946839ec072e17bd05b7b

I used the CLI from the X-CUBE-AI pack 5.0.0.

But I can't find the output meta info, such as the zero point and scale values.

I think the output layer is a softmax, and that layer has no weights.

So I edited the code as shown above.

Could you tell me what I am missing?

quantize the model

convert to c,h code

2020-06-04 5:24 AM

Hi,

Sorry for the delay, and thanks for the logs. They confirm my initial assumption. Your model has a softmax, and in this case the Keras post-training quantization process (stm32ai quantize command) keeps the softmax layer in float for accuracy reasons, so the application does not need to convert the output to float.

If you look at the generated reports (analyze, validate, or generate command), you can find this information in the summary at the beginning of the report file, including the scale/zero values. The information is also available in the generated C file, but it is a little trickier to find there.

...

input : quantize_input_1 [960 items, 960 B, ai_i8, scale=0.0012302960835251153, zero=127, (30, 32, 1)]

output : activation_2 [10 items, 40 B, ai_float, FLOAT32, (10,)]

...

A small additional comment: in the log, I noticed that the accuracy of your original model is low, 0.035 (3.5%), so the quantized model also has low accuracy. Is your original model correctly trained? Perhaps the representative data set you provided is not correct?

As explained in the documentation (from the X-CUBE-AI plug-in pack), the application can read the I/O format (and the meta-data, if needed) with the following code snippet. In your case, the output report.outputs[0] must have type == AI_BUFFER_FMT_TYPE_FLOAT.

    #include "network.h"

    static ai_handle network;

    {
      /* Fetch run-time network descriptor. This is MANDATORY
         to retrieve the meta parameters. They are NOT available
         with the definition of the AI_<NAME>_IN/OUT macro.
      */
      ai_network_report report;
      ai_network_get_info(network, &report);

      /* Set the descriptor of the first input tensor (index 0) */
      const ai_buffer *input = &report.inputs[0];

      /* Extract format of the tensor */
      const ai_buffer_format fmt_1 = AI_BUFFER_FORMAT(input);

      /* Extract the data type */
      const uint32_t type = AI_BUFFER_FMT_GET_TYPE(fmt_1); /* -> AI_BUFFER_FMT_TYPE_Q */

      /* Extract sign and number of bits */
      const ai_size sign = AI_BUFFER_FMT_GET_SIGN(fmt_1); /* -> 1 or 0 */
      const ai_size bits = AI_BUFFER_FMT_GET_BITS(fmt_1); /* -> 8 */

      /* Extract scale/zero_point values (only pos=0 is currently supported, per-tensor) */
      const ai_float scale = AI_BUFFER_META_INFO_INTQ_GET_SCALE(input->meta_info, 0);
      const int zero_point = AI_BUFFER_META_INFO_INTQ_GET_ZEROPOINT(input->meta_info, 0);
    ...

br,

Jean-Michel

2020-06-04 8:36 AM

Since I am in Korea, there is a time difference between us. First of all, thank you for continuing to help.

By mistake, I sent you results that I had tested with a different verification set. I have now built a proper model and verification set and tested again, but I still see what I think is the problem.

The values for classes 3 and 7 barely change. As you said, I changed how the int-type value from the output is handled: I added 128 so that the range -128 to 127 becomes 0 to 255. After that, I rescaled the range from 256 to 100, just to show a percentage.

I only swapped the model in the function pack, so performance is probably only about 50%. But it still seems a little strange to me.

Below is the link to the new report, model, and other files. Thank you!

https://www.notion.so/clearlyhunch/Report-and-Model-files-1626ee6e775146538ff99a00091e4f88

From Juwon You.
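For reference, the earlier report in this thread lists the output tensor as ai_float, in which case no +128 offset would be needed on the output side. Below is a minimal sketch of reading such an output, assuming it is a plain array of float softmax probabilities in [0, 1]. The helper names argmax_f32 and to_percent are hypothetical and are not part of X-CUBE-AI or the function pack.

```c
#include <assert.h>
#include <stddef.h>

/* Return the index of the largest probability in a float softmax output. */
static size_t argmax_f32(const float *probs, size_t n)
{
    assert(probs != NULL && n > 0);
    size_t best = 0;
    for (size_t i = 1; i < n; ++i) {
        if (probs[i] > probs[best]) {
            best = i;
        }
    }
    return best;
}

/* Convert a probability to an integer percentage, clamped to [0, 100]. */
static int to_percent(float p)
{
    if (p < 0.0f) p = 0.0f;
    if (p > 1.0f) p = 1.0f;
    return (int)(p * 100.0f + 0.5f);
}
```

If the new model's report shows an integer output instead, the scale and zero-point from that report would have to be applied first, before converting to a percentage.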
