Validation mismatch on C model

Hey guys,

I am currently running an object detection model on the N6. To have it accelerated on the NPU, I quantize it during training (QAT) in PyTorch. I made some adjustments to the exported QAT model in ONNX so that it runs on the N6 NPU. I compared the ONNX output before and after my transformations, and they are the same.

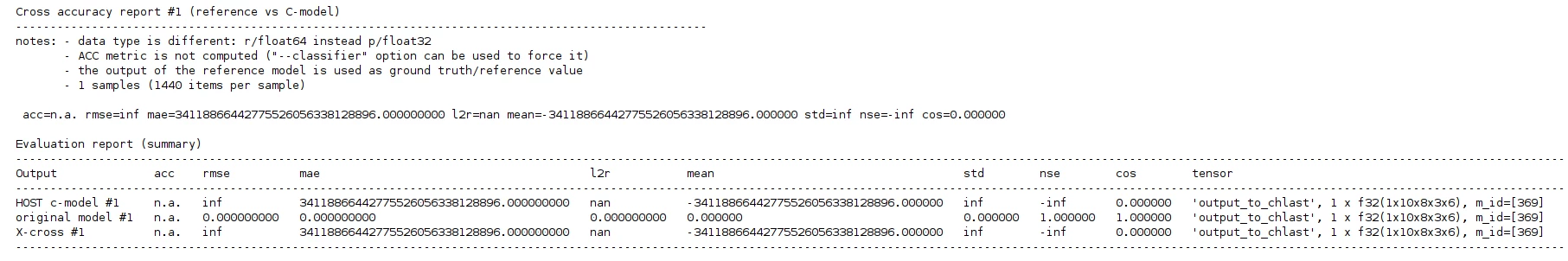

However, when I try to validate my model on the desktop (MCU), I get completely different output for the C model:

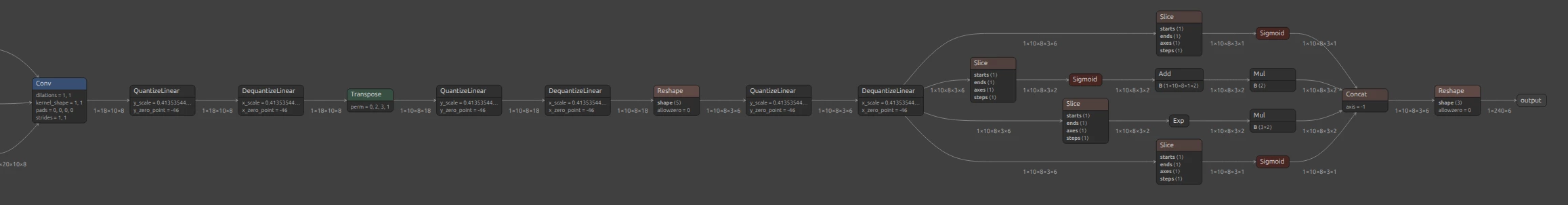

This is part of the ONNX model in Netron.

The input is quantized and the output is not.

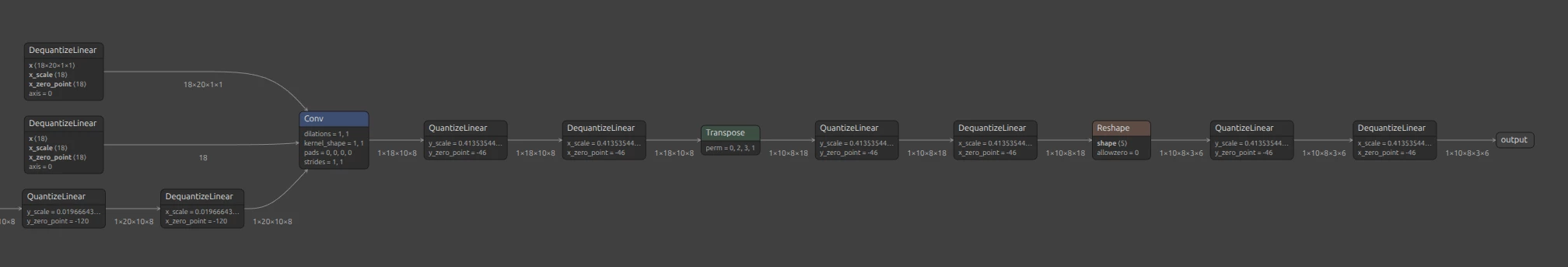

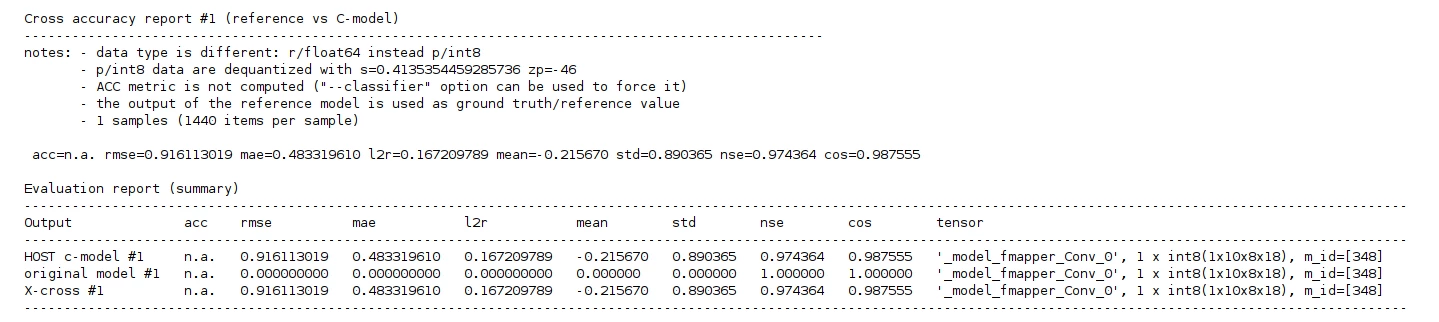

What I observed is that when I remove the layer after the Conv -> Transpose -> Reshape, the output of the C model somehow matches the ONNX model.

Why is this the case? Is there a problem with the Slice and Reshape layers because ST uses a channel-last layout rather than channel-first?
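To illustrate what I suspect, here is a minimal sketch in plain Python. The shapes, values, and helper names are made up for illustration and are not taken from my model or from the ST tooling. It only shows that the same Reshape followed by a Slice picks different elements depending on whether the underlying buffer is stored channel-first or channel-last, which would be a problem if no compensating transpose is applied:

```python
# Hypothetical toy tensor: C=2 channels, H=1, W=3 (not the real model shapes).
C, H, W = 2, 1, 3

def value(c, h, w):
    # Encode the index in the value so the element order is easy to read.
    return 100 * c + 10 * h + w

def flatten_channel_first():
    # Channel-first (NCHW) memory order: c varies slowest, w fastest.
    return [value(c, h, w) for c in range(C) for h in range(H) for w in range(W)]

def flatten_channel_last():
    # Channel-last (NHWC) memory order: c varies fastest.
    return [value(c, h, w) for h in range(H) for w in range(W) for c in range(C)]

def reshape_then_slice(flat, rows, cols, start, stop):
    # Reinterpret the flat buffer as (rows, cols), then keep columns [start:stop].
    grid = [flat[r * cols:(r + 1) * cols] for r in range(rows)]
    return [row[start:stop] for row in grid]

nchw = reshape_then_slice(flatten_channel_first(), 3, 2, 0, 1)
nhwc = reshape_then_slice(flatten_channel_last(), 3, 2, 0, 1)

print(nchw)  # [[0], [2], [101]]
print(nhwc)  # [[0], [1], [2]]
```

The two results differ even though the Reshape and Slice parameters are identical, so I wonder whether something like this happens in the generated C model.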

Thanks for the help!

If you need more context, let me know.